Residual Sum of Squares: Definition, Formula & Calculation

Master RSS in regression analysis: Learn definitions, formulas, calculations, and practical applications.

Understanding Residual Sum of Squares

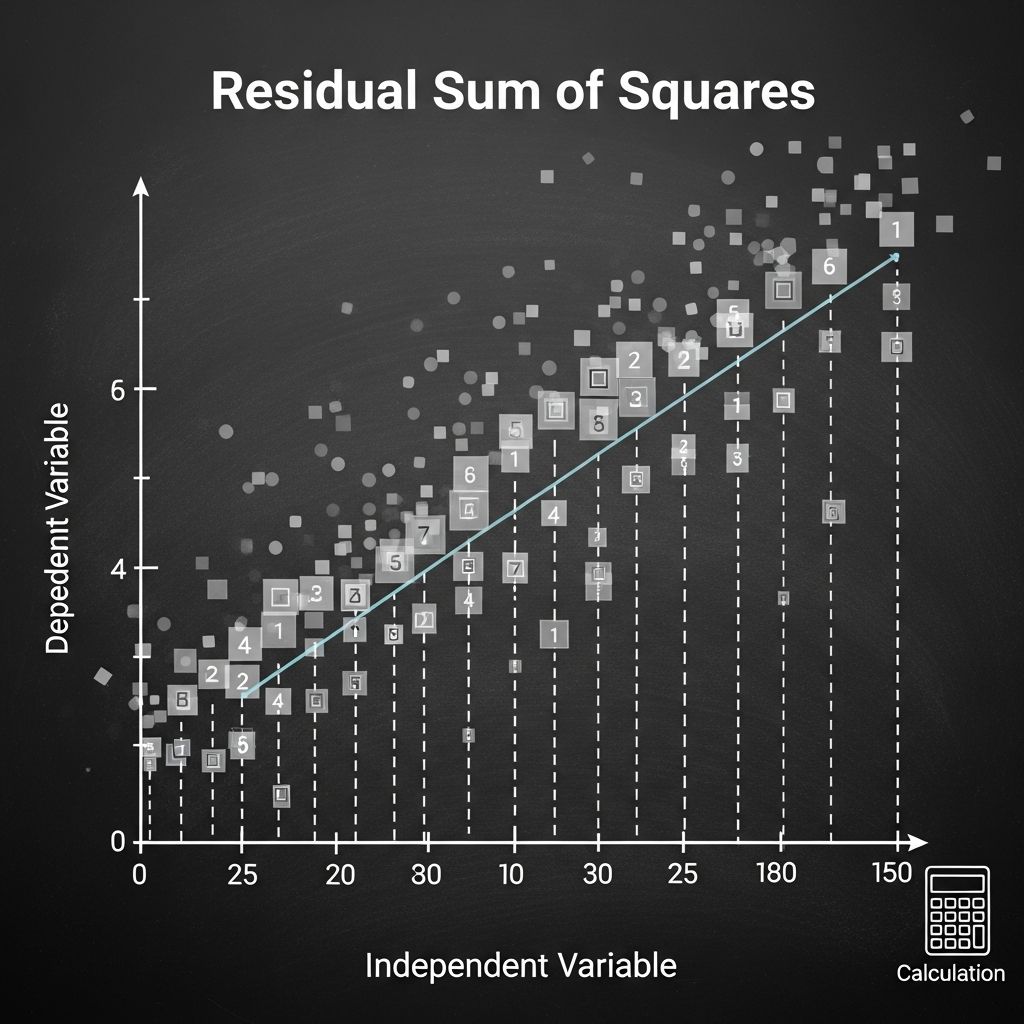

The Residual Sum of Squares (RSS), also known as the sum of squared residuals or sum of squared errors (SSE), is a fundamental statistical measure used in regression analysis to quantify the unexplained variation in data. It represents the discrepancy between observed values and the values predicted by a regression model. In essence, RSS measures how much error remains after fitting a model to data, making it an essential metric for evaluating model performance and accuracy.

The concept of RSS is rooted in the principle of ordinary least squares (OLS) regression, which seeks to minimize the sum of squared differences between actual and predicted values. Understanding RSS is crucial for statisticians, data scientists, financial analysts, and researchers who rely on regression models to draw meaningful insights from data.

What Does Residual Sum of Squares Measure?

The residual sum of squares measures the total amount of error in a regression model that cannot be explained by the independent variables. Each residual represents the vertical distance between an observed data point and the corresponding point on the fitted regression line. By squaring these residuals and summing them, RSS provides a single numeric value that indicates overall model fit quality.

A critical principle to remember is that smaller RSS values indicate better model fit, while larger RSS values suggest that the model does not adequately capture the relationship between variables. This inverse relationship makes RSS a valuable diagnostic tool for model comparison and selection.

The Residual Sum of Squares Formula

The formula for calculating the Residual Sum of Squares is straightforward:

RSS = Σ(yᵢ – ŷᵢ)²

Where:

- yᵢ represents the observed (actual) value of the dependent variable for the i-th observation

- ŷᵢ represents the predicted value from the regression model for the i-th observation

- Σ denotes the summation symbol, indicating we sum across all n observations

- n represents the total number of observations in the dataset

The difference (yᵢ – ŷᵢ) is called the residual or error term. By squaring each residual, we ensure that positive and negative deviations contribute equally to the total, and larger deviations have a disproportionately greater impact on the RSS value: a residual twice as large contributes four times as much.
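As a minimal sketch, the formula can be written in a few lines of Python. The function name `residual_sum_of_squares` and the sample numbers are illustrative assumptions, not part of any library:

```python
def residual_sum_of_squares(observed, predicted):
    """Return the sum over i of (y_i - y_hat_i)^2 for paired sequences."""
    if len(observed) != len(predicted):
        raise ValueError("observed and predicted must have the same length")
    return sum((y - y_hat) ** 2 for y, y_hat in zip(observed, predicted))


# Illustrative values only: three observations and their model predictions.
y = [3.0, 5.0, 7.5]
y_hat = [2.5, 5.5, 7.0]
rss = residual_sum_of_squares(y, y_hat)
print(rss)  # 0.75
```

Each residual here is ±0.5, so every observation contributes 0.25 regardless of its sign.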

The Three Components of Sum of Squares

In regression analysis, variation in the dependent variable is described by three related sums of squares, each with a specific meaning and calculation method. The total is decomposed into an explained part and a residual part:

Total Sum of Squares (TSS)

The Total Sum of Squares measures the total variation in the dependent variable from its mean value. It represents the overall dispersion of the data before fitting any model. TSS is calculated as:

TSS = Σ(yᵢ – ȳ)²

where ȳ is the mean of the dependent variable.

Explained Sum of Squares (ESS) or Sum of Squares Due to Regression (SSR)

The Explained Sum of Squares quantifies the variation in the dependent variable that is explained by the regression model. A higher ESS indicates that the model explains more of the variation in the data. ESS is calculated as:

ESS = Σ(ŷᵢ – ȳ)²

Residual Sum of Squares (RSS)

As discussed, RSS measures the unexplained variation—the portion of total variation that the model cannot account for.

The Fundamental Relationship: TSS = ESS + RSS

These three components are related through a fundamental equation in regression analysis:

TSS = ESS + RSS

This equation shows that the total variation in the dependent variable is partitioned into two parts: the variation explained by the model (ESS) and the variation not explained by the model (RSS). The identity holds for ordinary least squares models that include an intercept term, because the residuals then sum to zero and are uncorrelated with the fitted values. It provides the basis for calculating the coefficient of determination (R²), which is defined as:

R² = ESS / TSS = 1 – (RSS / TSS)

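For an OLS model with an intercept, evaluated on the data it was fitted to, R² ranges from 0 to 1. A value closer to 1 indicates that the model explains most of the variation in the dependent variable, while a value closer to 0 suggests poor explanatory power. Without an intercept, or on new data, 1 – (RSS / TSS) can be negative.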
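The decomposition can be checked numerically. The sketch below fits a simple linear regression with the closed-form least-squares solution and confirms that TSS matches ESS + RSS up to rounding error. The helper `fit_simple_ols` and the data are made up for illustration:

```python
def fit_simple_ols(x, y):
    """Closed-form least-squares intercept and slope for y = a + b*x."""
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    return intercept, slope


# Made-up data for illustration.
x = [1.0, 2.0, 3.0, 4.0, 5.0]
y = [2.1, 3.9, 6.2, 7.8, 10.1]

intercept, slope = fit_simple_ols(x, y)
y_hat = [intercept + slope * xi for xi in x]
y_bar = sum(y) / len(y)

tss = sum((yi - y_bar) ** 2 for yi in y)
ess = sum((yh - y_bar) ** 2 for yh in y_hat)
rss = sum((yi - yh) ** 2 for yi, yh in zip(y, y_hat))

# Both definitions of R^2 agree because the model includes an intercept.
r2_from_ess = ess / tss
r2_from_rss = 1 - rss / tss
print(f"TSS={tss:.4f}  ESS+RSS={ess + rss:.4f}")
print(f"R^2 (ESS/TSS)={r2_from_ess:.4f}  R^2 (1-RSS/TSS)={r2_from_rss:.4f}")
```

If the intercept were forced to zero, the two printed R² values would generally differ, which is one way to see why the identity depends on the intercept.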

Step-by-Step Calculation of Residual Sum of Squares

Calculating RSS involves a systematic approach that can be broken down into clear steps:

Step 1: Gather and Organize Data

Collect the actual values of your dependent variable (y) and any independent variables (x). Organize this data in a structured format, such as a spreadsheet or data frame, for easy reference and calculation.

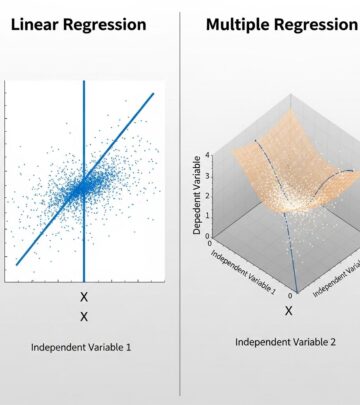

Step 2: Fit a Regression Model

Using your data, fit an appropriate regression model (linear, polynomial, multiple regression, etc.) to estimate the coefficients of your model. This produces predicted values (ŷᵢ) for each observation.

Step 3: Calculate Residuals

For each observation, calculate the residual by subtracting the predicted value from the observed value: residual = yᵢ – ŷᵢ

Step 4: Square Each Residual

Square each residual value to ensure all deviations contribute positively to the total: (yᵢ – ŷᵢ)²

Step 5: Sum All Squared Residuals

Add together all the squared residuals to obtain the final RSS value. This sum represents the total unexplained variation in your model.

Practical Example: Retail Sales Forecasting

Consider a retail business using linear regression to predict monthly sales based on advertising expenditure. Suppose the model produces the following results for five months:

| Month | Actual Sales ($) | Predicted Sales ($) | Residual | Squared Residual |
|---|---|---|---|---|
| 1 | 10,000 | 9,800 | 200 | 40,000 |
| 2 | 12,500 | 12,300 | 200 | 40,000 |
| 3 | 11,000 | 11,500 | -500 | 250,000 |
| 4 | 13,000 | 12,800 | 200 | 40,000 |
| 5 | 14,500 | 14,600 | -100 | 10,000 |

RSS = 40,000 + 40,000 + 250,000 + 40,000 + 10,000 = 380,000

This RSS value indicates the total squared deviation of actual sales from predicted values. The business can use this metric to compare different models and select the one with the lowest RSS for more accurate sales forecasting.
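The same arithmetic can be reproduced in a short Python sketch using the actual and predicted sales from the example. The variable names are illustrative:

```python
# Actual and predicted monthly sales from the five-month example, in dollars.
actual = [10_000, 12_500, 11_000, 13_000, 14_500]
predicted = [9_800, 12_300, 11_500, 12_800, 14_600]

residuals = [a - p for a, p in zip(actual, predicted)]
squared = [r ** 2 for r in residuals]
rss = sum(squared)

print(residuals)  # [200, 200, -500, 200, -100]
print(squared)    # [40000, 40000, 250000, 40000, 10000]
print(rss)        # 380000
```

Month 3 alone accounts for 250,000 of the 380,000 total, which shows how a single large miss dominates the sum.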

Applications of Residual Sum of Squares

RSS has numerous applications across different fields and industries:

Model Selection and Comparison

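When comparing multiple regression models fitted to the same dataset and dependent variable, a lower RSS indicates a closer fit to the data. The comparison is most direct between models with the same number of parameters, since adding parameters to a least-squares model can only lower or maintain RSS on the data used for fitting. This approach is particularly useful when deciding between competing theoretical models or different specifications of the same model.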

Financial Analysis and Risk Assessment

In finance, RSS is used to evaluate the fit of asset pricing models, such as the Capital Asset Pricing Model (CAPM), and to assess forecast accuracy in financial projections and valuations.

Time Series Forecasting

RSS is widely used in time series analysis for applications such as stock price prediction, sales forecasting, demand planning, and economic indicator forecasting. Lower RSS on data held out from fitting indicates more accurate forecasts.

Quality Control and Process Optimization

Manufacturing and quality assurance teams use RSS to evaluate models that predict product quality metrics and to identify process improvements.

Real Estate Valuation

RSS helps real estate analysts build and validate models for property price prediction based on features such as location, size, age, and condition.

Interpreting and Improving RSS

While RSS itself provides absolute values of unexplained variation, its interpretation often requires context. Comparing RSS values across models with the same dependent variable can be meaningful, but comparing RSS values between different datasets or different dependent variables requires standardization through metrics like adjusted R² or the root mean square error (RMSE).
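As a rough sketch of that standardization, RSS can be converted to a mean squared error (MSE) and RMSE by dividing by the number of observations. Dividing by n is an assumption here, since some texts divide by the residual degrees of freedom instead. The function name `mse_and_rmse` is hypothetical, and the figures come from the retail example above:

```python
import math


def mse_and_rmse(rss, n):
    """Return the average squared residual (RSS / n) and its square root."""
    mse = rss / n
    return mse, math.sqrt(mse)


# Retail example: RSS = 380,000 over 5 months.
mse, rmse = mse_and_rmse(380_000, 5)
print(mse)             # 76000.0
print(round(rmse, 2))  # 275.68
```

RMSE is in the same units as the dependent variable, so a typical miss of about $276 per month is easier to interpret than a squared total of 380,000.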

To improve RSS and achieve better model fit, analysts may:

- Include additional relevant independent variables

- Transform variables to better capture nonlinear relationships

- Remove outliers that disproportionately influence the model

- Consider interaction terms between variables

- Use more sophisticated modeling techniques such as polynomial regression or machine learning methods

- Verify that model assumptions (linearity, homoscedasticity, normality of residuals) are satisfied

Limitations and Considerations

While RSS is a valuable metric, it has several limitations worth noting. First, RSS generally grows with the number of observations, because each observation adds a non-negative term to the sum, so direct comparison between datasets of different sizes is problematic. Second, RSS does not account for model complexity: adding parameters to a least-squares model never increases RSS on the fitting data, even when the extra parameters only overfit. Third, RSS is sensitive to outliers, which can substantially inflate its value and lead to misleading conclusions about model fit.

To address these limitations, statisticians often employ adjusted R², Akaike Information Criterion (AIC), or Bayesian Information Criterion (BIC), which penalize model complexity and provide more balanced assessments of model quality.
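The sketch below shows how these criteria can be computed from RSS. The AIC and BIC expressions are the common least-squares forms that assume Gaussian errors and drop additive constants, so only differences between models fitted to the same data are meaningful. The function names and the two models are hypothetical:

```python
import math


def adjusted_r2(rss, tss, n, p):
    """Adjusted R^2 for n observations and p predictors (intercept not counted)."""
    return 1 - (rss / (n - p - 1)) / (tss / (n - 1))


def aic_gaussian(rss, n, k):
    """AIC up to an additive constant; k is the number of fitted parameters."""
    return n * math.log(rss / n) + 2 * k


def bic_gaussian(rss, n, k):
    """BIC up to an additive constant; k is the number of fitted parameters."""
    return n * math.log(rss / n) + k * math.log(n)


# Hypothetical comparison: model B adds two parameters for a small drop in RSS.
n, tss = 50, 1000.0
model_a = {"rss": 400.0, "k": 2}  # intercept + 1 predictor
model_b = {"rss": 395.0, "k": 4}  # intercept + 3 predictors

for name, m in (("A", model_a), ("B", model_b)):
    adj = adjusted_r2(m["rss"], tss, n, m["k"] - 1)
    aic = aic_gaussian(m["rss"], n, m["k"])
    bic = bic_gaussian(m["rss"], n, m["k"])
    print(f"model {name}: RSS={m['rss']:.0f}  adj R^2={adj:.4f}  AIC={aic:.2f}  BIC={bic:.2f}")
```

In this made-up case model B has the lower RSS, but all three criteria favor model A because the small improvement does not justify the extra parameters.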

Frequently Asked Questions

Q: What is the difference between RSS, SSE, and residuals?

A: Residuals are the individual differences between observed and predicted values. SSE (Sum of Squared Errors) and RSS (Residual Sum of Squares) are synonymous terms for the sum of squared residuals, and the literature uses them interchangeably. The abbreviation SSR needs more care: some texts use it for the sum of squared residuals, while others use it for the sum of squares due to regression (ESS).

Q: Why do we square the residuals in the RSS calculation?

A: Squaring residuals serves multiple purposes: it makes all errors positive (eliminating sign cancellation), gives greater weight to larger deviations, and aligns with statistical theory for ordinary least squares estimation, which minimizes the sum of squared errors.

Q: Can RSS be negative?

A: No, RSS cannot be negative. Since each term in the sum is a squared value, RSS is always non-negative. The minimum possible value is zero, which occurs only when the model perfectly predicts all observed values.

Q: How is RSS used in model validation?

A: RSS is used to assess how well a model trained on one dataset performs on data it has not seen. Lower RSS on validation or test data indicates better generalization and predictive accuracy. Comparing RSS between training and test data, scaled by the number of observations in each, can help identify overfitting.

Q: Is lower RSS always better?

A: Generally yes, lower RSS indicates better model fit. However, extremely low RSS on training data combined with high RSS on test data suggests overfitting. The best model balances low training RSS with good generalization performance and reasonable complexity.

