Regression: Statistical Method for Predicting Outcomes

Master regression analysis: A comprehensive guide to predictive modeling and data relationships.

What is Regression?

Regression is a statistical method used to model the relationship between variables and predict future outcomes based on historical data. In the context of finance and investing, regression analysis helps analysts understand how different factors influence asset prices, returns, and other financial metrics. The technique establishes a mathematical equation that represents the relationship between one dependent variable (the outcome being predicted) and one or more independent variables (the factors influencing that outcome).

The fundamental principle behind regression is that many real-world phenomena exhibit relationships that can be quantified and measured. By identifying these relationships, investors, analysts, and researchers can make more informed decisions and develop more accurate forecasting models. Regression analysis is widely used across multiple disciplines, including finance, economics, biology, social sciences, and engineering.

How Does Regression Analysis Work?

Regression analysis operates by fitting a mathematical function to observed data points. The process involves several key steps:

- Data Collection: Gather historical data on the dependent variable and relevant independent variables.

- Model Specification: Choose an appropriate regression model based on the nature of the data and research question.

- Parameter Estimation: Calculate the coefficients that define the relationship between variables.

- Model Validation: Test the model’s accuracy and reliability using statistical measures.

- Forecasting: Use the validated model to predict future outcomes.

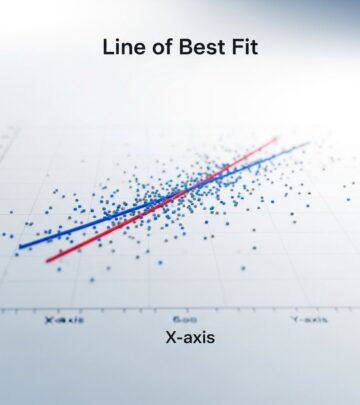

The most common approach is ordinary least squares (OLS) regression, which minimizes the sum of squared differences between observed values and predicted values. For a linear model, this yields the best-fitting line in the sense that no other line has a smaller sum of squared prediction errors on the same data.
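As a minimal sketch of the least-squares idea, the following fits a line to synthetic data with NumPy and checks that moving the slope away from the fitted value increases the sum of squared errors. The data, the seed, and the helper name `sse` are illustrative assumptions, not part of any particular analysis.

```python
import numpy as np

# Synthetic data (illustrative only): y depends linearly on x plus noise.
rng = np.random.default_rng(0)
x = rng.uniform(0, 10, size=200)
y = 2.0 + 0.5 * x + rng.normal(0, 1.0, size=200)

# Design matrix with a column of ones for the intercept.
X = np.column_stack([np.ones_like(x), x])

# Least-squares solution: the coefficients minimizing sum((y - X @ beta) ** 2).
beta, *_ = np.linalg.lstsq(X, y, rcond=None)


def sse(b):
    """Sum of squared differences between observed and predicted values."""
    residuals = y - X @ b
    return float(residuals @ residuals)


print("intercept, slope:", beta)
print("SSE at the OLS solution:", sse(beta))
print("SSE after nudging the slope:", sse(beta + np.array([0.0, 0.05])))
```

Any change to the fitted coefficients, not just the nudge shown here, gives a sum of squared errors at least as large as the OLS value.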

Types of Regression Analysis

Linear Regression

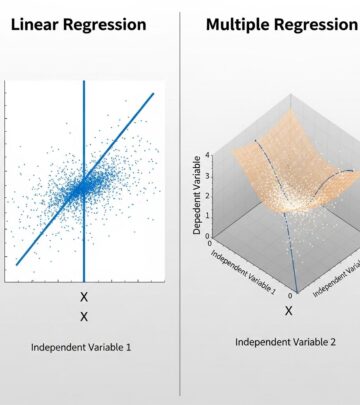

Linear regression is the most straightforward form of regression analysis, assuming a straight-line relationship between variables. In simple linear regression, one independent variable predicts a dependent variable. For example, an analyst might model how stock returns (dependent variable) relate to market index changes (independent variable). The regression equation takes the form: Y = a + bX, where Y is the predicted outcome, X is the independent variable, a is the intercept, and b is the slope coefficient.
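For the equation Y = a + bX, the OLS estimates have a simple closed form: b is the covariance of X and Y divided by the variance of X, and a is the mean of Y minus b times the mean of X. The sketch below applies this to made-up daily returns; the series, the seed, and the variable names are assumptions for illustration.

```python
import numpy as np

rng = np.random.default_rng(1)

# Hypothetical daily returns: X is a market index, Y is a single stock.
market = rng.normal(0.0, 0.01, size=250)
stock = 0.0002 + 1.3 * market + rng.normal(0.0, 0.005, size=250)

# Closed-form OLS estimates for Y = a + bX.
b = np.cov(market, stock, ddof=1)[0, 1] / np.var(market, ddof=1)
a = stock.mean() - b * market.mean()

# Predicted stock return on a day when the index rises by 1%.
predicted = a + b * 0.01

print(f"intercept a = {a:.5f}, slope b = {b:.3f}")
print(f"predicted return for a 1% index move: {predicted:.4%}")
```

The slope b estimated this way is the same quantity `np.polyfit(market, stock, 1)` would return as its first coefficient.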

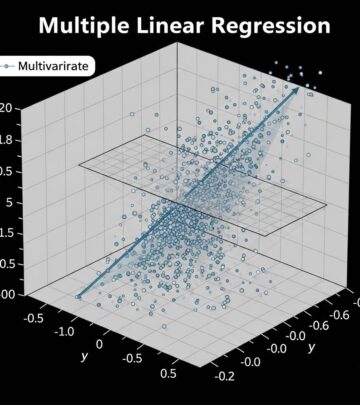

Multiple linear regression extends this concept by incorporating several independent variables simultaneously, allowing analysts to model more complex relationships. This approach is particularly valuable in finance for understanding how multiple economic factors, company metrics, or market conditions collectively influence asset performance.
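A multiple regression can be sketched the same way by placing each independent variable in its own column of a design matrix. The three factors below are hypothetical and the data are simulated, so the "true" coefficients are known and can be compared with the estimates.

```python
import numpy as np

rng = np.random.default_rng(2)
n = 300

# Hypothetical standardized factors (simulated, not real market data).
rate_change = rng.normal(0, 1, n)
earnings_surprise = rng.normal(0, 1, n)
market_return = rng.normal(0, 1, n)

# Simulated asset return driven by all three factors plus noise.
asset_return = (
    0.1
    - 0.4 * rate_change
    + 0.7 * earnings_surprise
    + 1.1 * market_return
    + rng.normal(0, 0.5, n)
)

# One column per predictor, plus a column of ones for the intercept.
X = np.column_stack([np.ones(n), rate_change, earnings_surprise, market_return])
coef, *_ = np.linalg.lstsq(X, asset_return, rcond=None)

for name, value in zip(["intercept", "rate", "earnings", "market"], coef):
    print(f"{name:>10}: {value:+.3f}")
```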

Logistic Regression

Logistic regression is used when the dependent variable is categorical or binary (e.g., default/no default, buy/sell, success/failure). Rather than predicting continuous values, logistic regression estimates the probability of an outcome occurring. Financial institutions frequently use logistic regression for credit risk assessment, predicting loan defaults, and determining credit scores. The model produces probability outputs between 0 and 1, making it ideal for classification problems.
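To illustrate how a logistic model turns a predictor into a probability, here is a small sketch that fits one by plain gradient descent on the average log-loss. The single predictor (`debt_ratio`), the simulated defaults, the learning rate, and the iteration count are all assumptions chosen for the example; production work would normally use a statistics library instead.

```python
import numpy as np


def sigmoid(z):
    """Map any real number to a probability between 0 and 1."""
    return 1.0 / (1.0 + np.exp(-z))


rng = np.random.default_rng(3)
n = 1000

# Hypothetical standardized debt-to-income ratio and simulated default flags.
debt_ratio = rng.normal(0, 1, n)
true_prob = sigmoid(-1.0 + 2.0 * debt_ratio)
default = (rng.uniform(size=n) < true_prob).astype(float)

X = np.column_stack([np.ones(n), debt_ratio])
w = np.zeros(2)

# Gradient descent on the average log-loss.
for _ in range(5000):
    p = sigmoid(X @ w)
    gradient = X.T @ (p - default) / n
    w -= 0.5 * gradient

probs = sigmoid(X @ w)
print("estimated intercept and slope:", w)
print("predicted default probability at debt_ratio = 1:", sigmoid(w[0] + w[1]))
```

A positive slope here means a higher debt ratio raises the estimated probability of default.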

Polynomial Regression

Polynomial regression accommodates curved, non-linear relationships between variables by using higher-order terms (squared, cubed, etc.). This approach captures more complex patterns that simple linear relationships cannot explain. For instance, the relationship between investment risk and returns may not be strictly linear, and polynomial regression can model such nuanced relationships more accurately.
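The sketch below compares a straight-line fit with a quadratic fit on simulated data that has a curved relationship. The risk and return values are invented for illustration, and `np.polyfit` is used for both fits.

```python
import numpy as np

rng = np.random.default_rng(4)

# Hypothetical risk scores and returns with a curved (concave) relationship.
risk = np.linspace(0, 1, 100)
ret = 0.02 + 0.30 * risk - 0.20 * risk**2 + rng.normal(0, 0.005, 100)

# np.polyfit returns coefficients from the highest power down to the constant.
linear_fit = np.polyfit(risk, ret, deg=1)
quad_fit = np.polyfit(risk, ret, deg=2)


def sse(coeffs):
    """Sum of squared errors of a polynomial fit on the sample."""
    return float(np.sum((ret - np.polyval(coeffs, risk)) ** 2))


print("linear SSE:   ", sse(linear_fit))
print("quadratic SSE:", sse(quad_fit))
print("coefficient on the squared term:", quad_fit[0])
```

A polynomial regression is still linear in its coefficients, which is why the same least-squares machinery applies.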

Ridge and Lasso Regression

Ridge and Lasso regression are regularization techniques that address overfitting in models with many variables. Ridge regression adds a penalty proportional to the sum of squared coefficients to the fitting objective, shrinking the coefficients toward zero and improving model stability. Lasso regression instead penalizes the sum of absolute coefficient values, which can shrink some coefficients exactly to zero and so performs variable selection by eliminating less important variables. These methods are particularly useful when working with datasets containing many potential predictors.
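The following sketch contrasts the two penalties on simulated data in which only two of six predictors matter. Ridge is computed from its closed-form solution, and Lasso from a basic coordinate-descent loop. The penalty strengths (`lam_ridge`, `lam_lasso`) are arbitrary assumptions, and real projects would typically choose them by cross-validation.

```python
import numpy as np

rng = np.random.default_rng(5)
n, p = 200, 6
X = rng.normal(size=(n, p))
true_beta = np.array([1.5, -2.0, 0.0, 0.0, 0.0, 0.0])  # only two predictors matter
y = X @ true_beta + rng.normal(0, 1.0, n)

# Center the data so that no intercept term is needed.
X = X - X.mean(axis=0)
y = y - y.mean()

# Unpenalized least squares for comparison.
ols = np.linalg.solve(X.T @ X, X.T @ y)

# Ridge: minimizes sum of squared errors + lam_ridge * sum(beta ** 2).
lam_ridge = 50.0
ridge = np.linalg.solve(X.T @ X + lam_ridge * np.eye(p), X.T @ y)


def soft_threshold(rho, lam):
    """Shrink rho toward zero by lam, returning exactly zero if |rho| <= lam."""
    return np.sign(rho) * max(abs(rho) - lam, 0.0)


# Lasso: minimizes (1 / (2n)) * sum of squared errors + lam_lasso * sum(|beta|),
# solved here by cycling through the coefficients one at a time.
lam_lasso = 0.5
lasso = np.zeros(p)
for _ in range(200):
    for j in range(p):
        partial_resid = y - X @ lasso + X[:, j] * lasso[j]
        rho = X[:, j] @ partial_resid / n
        z = X[:, j] @ X[:, j] / n
        lasso[j] = soft_threshold(rho, lam_lasso) / z

print("OLS:  ", np.round(ols, 3))
print("Ridge:", np.round(ridge, 3))
print("Lasso:", np.round(lasso, 3))
```

Ridge shrinks every coefficient but leaves them all nonzero, while Lasso sets the coefficients of the irrelevant predictors to exactly zero in this setup.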

Key Components of Regression Analysis

Dependent and Independent Variables

The dependent variable is what you aim to predict or explain (also called the outcome or response variable). The independent variables are the factors hypothesized to influence the dependent variable (also called predictors or explanatory variables). In financial analysis, stock price might serve as the dependent variable, with earnings per share, company debt levels, and market sentiment as independent variables.

Regression Coefficients

Regression coefficients quantify the relationship between independent and dependent variables. The coefficient’s magnitude indicates the strength of influence, while its sign (positive or negative) indicates the direction of the relationship. Statistical significance testing determines whether observed relationships likely reflect genuine patterns or random variation.

R-Squared (Coefficient of Determination)

R-squared measures the proportion of variance in the dependent variable explained by the independent variables. For a least-squares model that includes an intercept, values computed on the fitting data range from 0 to 1, with higher values indicating better fit. An R-squared of 0.85 means the model explains 85% of the variability in the outcome. However, a high R-squared alone doesn’t guarantee a good model: it never decreases when more variables are added, so residual analysis and other diagnostics are equally important.
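R-squared can be computed directly from its definition as one minus the ratio of unexplained to total variation. The sketch below does this on simulated data; the variable names `ss_res` and `ss_tot` are conventional shorthand chosen for the example.

```python
import numpy as np

rng = np.random.default_rng(6)
x = rng.normal(size=150)
y = 1.0 + 2.0 * x + rng.normal(0, 1.0, 150)

# Fit a simple linear regression and compute fitted values.
slope, intercept = np.polyfit(x, y, 1)
y_hat = intercept + slope * x

ss_res = np.sum((y - y_hat) ** 2)     # variation left unexplained by the model
ss_tot = np.sum((y - y.mean()) ** 2)  # total variation around the mean
r_squared = 1.0 - ss_res / ss_tot

print(f"R-squared: {r_squared:.3f}")
```

With a single independent variable, this value equals the squared correlation between x and y.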

Standard Error and Confidence Intervals

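The standard error of the regression measures the typical distance between observed and predicted values, indicating how precise the model’s predictions are. Each coefficient also has its own standard error, which reflects the uncertainty in that estimate. Confidence intervals place ranges around estimated coefficients, and prediction intervals do the same for individual predictions, acknowledging inherent uncertainty. These measures help analysts assess how much confidence to place in specific estimates and predictions.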
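As a rough sketch of these quantities, the code below computes the residual standard error, the standard error of each coefficient, and approximate 95% confidence intervals for the coefficients. It assumes the classical OLS setting and uses the normal critical value 1.96 as a simplification; exact intervals use the t-distribution, which gives slightly wider ranges in small samples.

```python
import numpy as np

rng = np.random.default_rng(7)
n = 200
x = rng.uniform(0, 10, n)
y = 3.0 + 0.8 * x + rng.normal(0, 2.0, n)

X = np.column_stack([np.ones(n), x])
beta, *_ = np.linalg.lstsq(X, y, rcond=None)
residuals = y - X @ beta

# Residual standard error: typical size of a prediction error.
dof = n - X.shape[1]
resid_std_error = np.sqrt(residuals @ residuals / dof)

# Standard errors of the coefficients from the estimated covariance matrix.
cov_beta = resid_std_error**2 * np.linalg.inv(X.T @ X)
coef_se = np.sqrt(np.diag(cov_beta))

# Approximate 95% confidence intervals (normal approximation, an assumption).
z = 1.96
ci_low = beta - z * coef_se
ci_high = beta + z * coef_se

print("residual standard error:", resid_std_error)
for name, lo, hi in zip(["intercept", "slope"], ci_low, ci_high):
    print(f"{name}: [{lo:.3f}, {hi:.3f}]")
```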

Applications in Finance and Investing

Regression analysis serves numerous practical applications in the financial sector:

| Application | Purpose | Example |
|---|---|---|
| Portfolio Risk Assessment | Understanding how individual assets contribute to portfolio risk | Beta calculation measuring stock volatility relative to market index |
| Valuation Models | Estimating fair value for stocks and other assets | Price-to-earnings regression predicting stock prices |
| Credit Risk Analysis | Assessing default probability and loan quality | Logistic regression identifying high-risk borrowers |
| Economic Forecasting | Predicting economic indicators and market trends | Inflation regression based on money supply and employment |
| Performance Attribution | Analyzing factors driving investment returns | Factor regression decomposing fund performance |

Advantages of Regression Analysis

- Predictive Power: Enables forecasting of future outcomes based on historical patterns and relationships.

- Relationship Quantification: Translates complex relationships into interpretable numerical terms.

- Multiple Factor Analysis: Simultaneously evaluates the influence of multiple variables.

- Statistical Rigor: Provides confidence measures and significance testing for findings.

- Flexibility: Adapts to various data types and relationship patterns through different regression approaches.

- Computational Efficiency: Relatively quick to implement and interpret compared to complex machine learning algorithms.

Limitations and Challenges

Despite its widespread use, regression analysis has notable limitations:

- Correlation vs. Causation: Regression identifies relationships but doesn’t prove causation. A strong relationship between two variables doesn’t mean one causes the other; both might be influenced by a third factor.

- Assumption Violations: Regression assumes linearity, normal distribution of errors, and constant variance; violations can compromise results.

- Outliers and Influential Points: Extreme values can disproportionately affect regression coefficients and predictions.

- Multicollinearity: When independent variables are highly correlated with each other, coefficient estimates become unstable and unreliable.

- Overfitting: Complex models with many variables may fit historical data well but perform poorly on new data.
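One common way to check for multicollinearity is the variance inflation factor (VIF), which regresses each predictor on the others and reports 1 / (1 - R-squared) for that auxiliary regression. The sketch below is a minimal NumPy version; the helper name `vif` and the simulated predictors are assumptions, and thresholds such as "VIF above 10" are rules of thumb rather than strict tests.

```python
import numpy as np


def vif(X):
    """Variance inflation factor for each column of X (predictors only, no intercept)."""
    n, p = X.shape
    out = np.empty(p)
    for j in range(p):
        target = X[:, j]
        # Regress predictor j on all the other predictors plus an intercept.
        others = np.column_stack([np.ones(n), np.delete(X, j, axis=1)])
        coef, *_ = np.linalg.lstsq(others, target, rcond=None)
        resid = target - others @ coef
        r2 = 1.0 - resid @ resid / np.sum((target - target.mean()) ** 2)
        out[j] = 1.0 / (1.0 - r2)
    return out


rng = np.random.default_rng(8)
n = 500
x1 = rng.normal(size=n)
x2 = x1 + rng.normal(0, 0.1, n)  # nearly a copy of x1
x3 = rng.normal(size=n)          # unrelated to the other two

vifs = vif(np.column_stack([x1, x2, x3]))
print("VIFs:", np.round(vifs, 1))
```

The two nearly identical predictors receive very large VIFs, while the unrelated one stays close to 1.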

Common Mistakes in Regression Analysis

Analysts frequently make errors that compromise the validity of a regression analysis. Including too many variables without theoretical justification leads to overfitting. Ignoring assumptions about data distribution and error structure produces unreliable results. Failing to examine residuals means missing patterns that would signal model misspecification. Using regression on non-stationary financial time series without proper transformation can yield spurious relationships. Additionally, extrapolating predictions far beyond the range of the historical data significantly increases the risk of error.
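The spurious-relationship problem can be explored with a small simulation: two random walks are generated independently, so any relationship between their levels is accidental. Regressing one on the other in levels often produces a sizable R-squared anyway, while the same regression on first differences shows essentially none. The seed and series length are arbitrary assumptions, and the levels result varies from run to run.

```python
import numpy as np


def r_squared(x, y):
    """R-squared of a simple linear regression of y on x (the squared correlation)."""
    return float(np.corrcoef(x, y)[0, 1] ** 2)


rng = np.random.default_rng(12)
n = 500

# Two independent random walks (non-stationary series with no true relationship).
walk_a = np.cumsum(rng.normal(size=n))
walk_b = np.cumsum(rng.normal(size=n))

r2_levels = r_squared(walk_a, walk_b)
r2_diffs = r_squared(np.diff(walk_a), np.diff(walk_b))

print(f"R-squared in levels:            {r2_levels:.3f}")
print(f"R-squared in first differences: {r2_diffs:.3f}")
```

Differencing is one of the transformations that can make such series suitable for regression.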

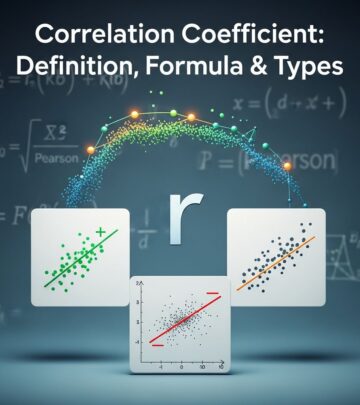

Regression vs. Correlation

While related, regression and correlation serve different purposes. Correlation measures the strength and direction of a linear relationship between two variables, ranging from -1 to +1. Regression goes further by establishing a predictive model and enabling outcome forecasting. Correlation simply describes association; regression explains and predicts. In practice, analysts often use both—correlation analysis for initial exploration and regression for deeper modeling and forecasting applications.
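The link between the two can be made concrete for the two-variable case: the regression slope equals the correlation coefficient multiplied by the ratio of the standard deviations of Y and X. The sketch below checks this on simulated data; the numbers are illustrative assumptions.

```python
import numpy as np

rng = np.random.default_rng(9)
x = rng.normal(0, 2.0, 300)
y = 5.0 + 1.5 * x + rng.normal(0, 3.0, 300)

# Correlation: a unit-free measure of linear association between -1 and +1.
r = np.corrcoef(x, y)[0, 1]

# Regression: an equation that can be used to predict y from x.
slope, intercept = np.polyfit(x, y, 1)

# The slope can be recovered from the correlation and the two standard deviations.
slope_from_r = r * y.std(ddof=1) / x.std(ddof=1)

print(f"correlation r = {r:.3f}")
print(f"regression slope = {slope:.3f}, r * sd(y) / sd(x) = {slope_from_r:.3f}")
```

Correlation is symmetric in x and y, while the regression slope changes if the roles of the two variables are swapped.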

Interpreting Regression Results

Proper interpretation requires understanding several key outputs. The regression coefficient indicates how much the dependent variable changes for a one-unit increase in an independent variable, holding other factors constant. The p-value is the probability of seeing an estimate at least as extreme as the one observed if the true coefficient were zero; values below 0.05 are conventionally treated as statistically significant. The F-statistic tests whether the independent variables, taken together, explain a statistically significant share of the variation in the outcome. Examining residual plots helps identify potential model problems and assumption violations. Analysts should never rely on a single metric; comprehensive diagnosis requires multiple analytical perspectives.
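These outputs can be computed by hand for a small simulated model, as sketched below. The p-values use a normal approximation via `math.erfc`, which is an assumption made to keep the example dependency-free; statistical packages use the t-distribution, and the two agree closely in large samples.

```python
import math

import numpy as np

rng = np.random.default_rng(10)
n = 250
x1 = rng.normal(size=n)
x2 = rng.normal(size=n)  # has no true effect on y in this simulation
y = 1.0 + 0.9 * x1 + rng.normal(0, 1.0, n)

X = np.column_stack([np.ones(n), x1, x2])
k = X.shape[1] - 1  # number of independent variables

beta, *_ = np.linalg.lstsq(X, y, rcond=None)
resid = y - X @ beta
sse = resid @ resid
sst = np.sum((y - y.mean()) ** 2)

# Coefficient standard errors and t-statistics.
sigma2 = sse / (n - k - 1)
se = np.sqrt(np.diag(sigma2 * np.linalg.inv(X.T @ X)))
t_stats = beta / se

# Two-sided p-values under a normal approximation (assumption, see lead-in).
p_approx = [math.erfc(abs(t) / math.sqrt(2)) for t in t_stats]

# F-statistic for the joint significance of all independent variables.
f_stat = ((sst - sse) / k) / (sse / (n - k - 1))

for name, b, t, p in zip(["intercept", "x1", "x2"], beta, t_stats, p_approx):
    print(f"{name:>9}: coef={b:+.3f}  t={t:+.2f}  p~{p:.4f}")
print(f"F-statistic: {f_stat:.1f}")
```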

Frequently Asked Questions About Regression

Q: What is the difference between simple and multiple regression?

A: Simple regression uses one independent variable to predict the dependent variable, while multiple regression incorporates several independent variables simultaneously. Multiple regression can provide more accurate predictions when the additional variables are genuinely related to the outcome.

Q: How do I know if my regression model is good?

A: Evaluate multiple criteria: R-squared indicates explanatory power, residual plots reveal assumption violations, statistical significance tests validate relationships, and out-of-sample testing confirms prediction accuracy. A good model balances simplicity with explanatory power.
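Out-of-sample testing can be sketched by fitting on one part of the data and measuring error on the rest. The example below compares a straight line with a degree-8 polynomial on simulated data whose true relationship is linear; the split sizes, degrees, and helper name `mse` are assumptions for illustration.

```python
import numpy as np

rng = np.random.default_rng(11)
x = rng.uniform(-1, 1, 60)
y = 1.0 + 2.0 * x + rng.normal(0, 0.5, 60)

# First half for fitting, second half held out for evaluation.
x_train, y_train = x[:30], y[:30]
x_test, y_test = x[30:], y[30:]


def mse(coeffs, xs, ys):
    """Mean squared prediction error of a polynomial model on (xs, ys)."""
    return float(np.mean((ys - np.polyval(coeffs, xs)) ** 2))


results = {}
for degree in (1, 8):
    coeffs = np.polyfit(x_train, y_train, degree)
    results[degree] = (mse(coeffs, x_train, y_train), mse(coeffs, x_test, y_test))
    print(
        f"degree {degree}: in-sample MSE = {results[degree][0]:.3f}, "
        f"out-of-sample MSE = {results[degree][1]:.3f}"
    )
```

The more flexible model always fits the training data at least as well, so only the held-out error indicates whether the extra complexity helps.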

Q: Can regression be used for time series forecasting?

A: Yes, but with caution. Financial time series often violate regression assumptions: errors tend to be autocorrelated, and many series are non-stationary. Specialized approaches like ARIMA or vector autoregression often perform better for time series data.

Q: What does a negative regression coefficient mean?

A: A negative coefficient indicates an inverse relationship—as the independent variable increases, the dependent variable tends to decrease. For example, a negative coefficient on interest rates in a stock price regression suggests higher rates correlate with lower stock prices.

Q: How many observations do I need for reliable regression results?

A: No fixed rule applies, but a common rule of thumb is at least 10-20 observations per independent variable. With fewer observations, coefficient estimates become unstable and less reliable. Larger sample sizes increase statistical power and narrow the uncertainty around estimates.

Q: Is regression affected by data outliers?

A: Yes, outliers significantly affect regression results, especially with small sample sizes. They can distort coefficients and inflate standard errors. Identifying and appropriately handling outliers through robust regression methods or careful analysis improves model reliability.
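A tiny example shows the effect: ten points that lie exactly on a line are fitted twice, once as they are and once after a single value is shifted far from the line. The numbers are made up for illustration.

```python
import numpy as np

x = np.arange(10, dtype=float)
y_clean = 1.0 + 2.0 * x          # points lie exactly on a line
y_outlier = y_clean.copy()
y_outlier[-1] += 50.0            # one extreme observation at the edge of the x range

slope_clean, intercept_clean = np.polyfit(x, y_clean, 1)
slope_outlier, intercept_outlier = np.polyfit(x, y_outlier, 1)

print(f"without outlier: slope = {slope_clean:.3f}, intercept = {intercept_clean:.3f}")
print(f"with outlier:    slope = {slope_outlier:.3f}, intercept = {intercept_outlier:.3f}")
```

Because the altered point sits at the end of the x range, it has high leverage and pulls the fitted line strongly toward itself.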

Conclusion

Regression analysis remains one of the most powerful and widely used statistical tools in finance, investing, and research. By quantifying relationships between variables, it enables predictions, risk assessment, and informed decision-making. From simple linear models to advanced machine learning applications, regression provides the foundation for understanding how different factors influence outcomes. While regression has limitations and requires careful application, proper implementation and interpretation make it invaluable for analysts seeking to navigate complex financial landscapes and make evidence-based decisions. Understanding regression fundamentals empowers professionals across industries to extract meaningful insights from data and build robust predictive models.


Read full bio of Sneha Tete