R-Squared: Measure Statistical Model Fit


Understanding R-Squared: A Comprehensive Guide to Statistical Model Fit

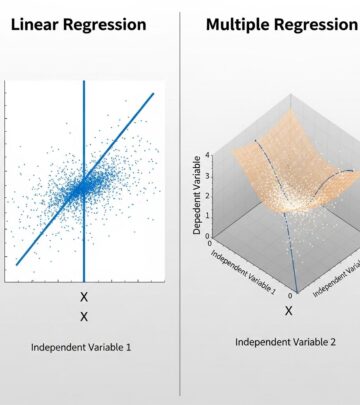

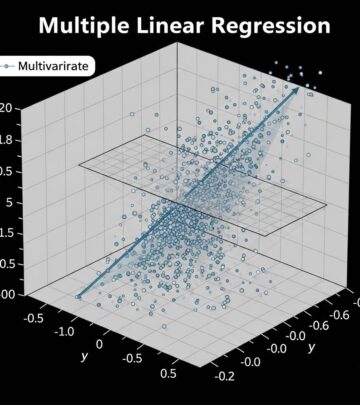

R-squared, denoted as R² or r², represents a fundamental statistical measure used to evaluate the performance of regression models across various disciplines. Also known as the coefficient of determination, R-squared quantifies the proportion of variation in a dependent variable that can be explained by independent variable(s) in a predictive model. This metric has become essential for researchers, analysts, and data scientists who need to assess how well their statistical models fit observed data.

The importance of R-squared lies in its ability to provide a standardized measure for comparing model performance. By offering a single numerical value, typically between 0 and 1 (or 0% to 100%), R-squared enables quick evaluation of how well a regression model captures the underlying patterns in data. Understanding this metric is crucial for anyone working with quantitative analysis, from financial forecasting to clinical research.

What Is R-Squared?

R-squared is a statistical measure that indicates how well independent variables explain the variation in a dependent variable within a regression model. For a least-squares model with an intercept, evaluated on the data it was fitted to, the value ranges from 0.00 to 1.00, where 0.00 means the model explains none of the variability in the outcome, and 1.00 indicates a perfect fit where the model explains all variability in the dependent variable.

When R² = 0.49, for example, 49% of the variability in the dependent variable is accounted for by the model, and the remaining 51% is unexplained. The higher the R-squared value, the more of the observed variation the regression model captures.

How R-Squared Is Calculated

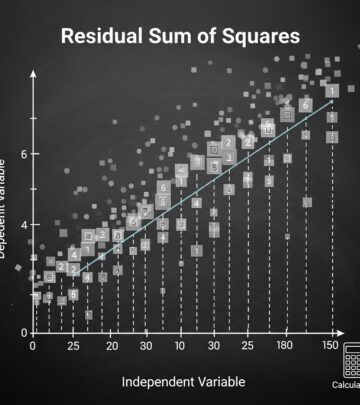

R-squared can be calculated using several key statistical components. The primary method involves three main elements:

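- Mean of observed Y values (Ȳ): The average of all dependent variable observations

- Total Sum of Squares (TSS): The total variation in the dependent variable, measured as the sum of squared differences between each observation and the mean

- Residual Sum of Squares (RSS): The variation not explained by the model, measured as the sum of squared differences between each observation and its predicted value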

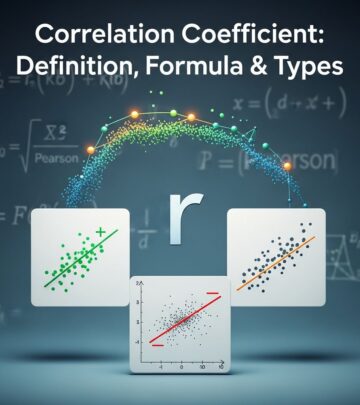

The fundamental formula for R-squared expresses the proportion of total variance explained by the regression model. In simple linear regression with an intercept, R² equals the square of the Pearson correlation coefficient between observed outcomes and predicted values. For multiple regression models, R² represents the square of the coefficient of multiple correlation.
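
Written out in terms of the residual and total sums of squares, the standard definition is given below as a reference sketch. The symbols are notation introduced here, not taken from the original figures: $y_i$ is an observed value, $\hat{y}_i$ is the model's prediction for it, $\bar{y}$ is the mean of the observed values, and $n$ is the number of observations.

```latex
\[
R^2 = 1 - \frac{\mathrm{RSS}}{\mathrm{TSS}},
\qquad
\mathrm{RSS} = \sum_{i=1}^{n} \left( y_i - \hat{y}_i \right)^2,
\qquad
\mathrm{TSS} = \sum_{i=1}^{n} \left( y_i - \bar{y} \right)^2
\]
```

When the predictions are no better than the mean, RSS equals TSS and R² is 0; when every prediction is exact, RSS is 0 and R² is 1.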

This calculation methodology ensures that R-squared values are comparable across different studies and datasets, making it a universal tool for model evaluation.
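
The calculation can be sketched in a few lines of plain Python. This is a minimal illustration, not a replacement for statistical software: the data values and the helper names `fit_line`, `r_squared`, and `pearson_r` are hypothetical and chosen only for this example. It fits a simple linear regression with an intercept, computes R² as 1 minus RSS divided by TSS, and compares the result with the squared Pearson correlation between observed and predicted values.

```python
import math

def fit_line(x, y):
    """Ordinary least squares fit of y = intercept + slope * x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept, slope

def r_squared(y, y_pred):
    """R^2 = 1 - RSS / TSS."""
    mean_y = sum(y) / len(y)
    tss = sum((yi - mean_y) ** 2 for yi in y)               # total variation
    rss = sum((yi - pi) ** 2 for yi, pi in zip(y, y_pred))  # unexplained variation
    return 1 - rss / tss

def pearson_r(a, b):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(a)
    mean_a = sum(a) / n
    mean_b = sum(b) / n
    cov = sum((ai - mean_a) * (bi - mean_b) for ai, bi in zip(a, b))
    var_a = sum((ai - mean_a) ** 2 for ai in a)
    var_b = sum((bi - mean_b) ** 2 for bi in b)
    return cov / math.sqrt(var_a * var_b)

# Hypothetical data, roughly following y = 2x
x = [1.0, 2.0, 3.0, 4.0, 5.0]
y = [2.1, 3.9, 6.2, 7.8, 10.1]

intercept, slope = fit_line(x, y)
y_pred = [intercept + slope * xi for xi in x]

r2 = r_squared(y, y_pred)
r = pearson_r(y, y_pred)
print(f"R^2 = {r2:.4f}, squared correlation = {r * r:.4f}")
```

The two printed values should agree up to floating-point rounding, which is the equivalence described above for simple linear regression with an intercept.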

Interpreting R-Squared Values

Understanding the Scale

Interpreting R-squared requires understanding what different ranges signify. When R² is closer to 1.0, more of the variation in the dependent variable is accounted for by the predictor variables. Conversely, as R² approaches 0.0, the relationship captured by the model is weaker, and the model’s predictions are not well aligned with the actual data points.

Context-Dependent Standards

What constitutes a “good” R-squared value varies significantly across different fields and disciplines. Standards differ because various fields face different challenges in data collection and analysis:

| Field | Acceptable R-Squared Range | Explanation |
|---|---|---|
| Social Sciences & Psychology | 0.10 to 0.30 | Lower values accepted due to inherent complexity of human behavior |
| Finance | 0.40 to 0.70 | Moderate range depending on analysis type and data availability |
| Physical Sciences & Engineering | 0.70 and above | Higher values expected due to more controlled experimental conditions |
| Physics & Chemistry | 0.70 to 0.99 | Generally high values needed for model validation |
| Clinical Medicine | 0.15 to 0.20 | Lower benchmarks appropriate for complex biological systems |

Clinical Medicine Considerations

In clinical research specifically, an R-squared value of 0.15-0.20 (15-20%) is considered a suitable benchmark, with appropriate caveats. This relatively lower threshold reflects the inherent complexity of clinical outcomes, which are influenced by numerous unpredictable biological and environmental factors. The appropriateness of any R-squared benchmark varies depending on the number and influence of factors contributing to the outcomes under investigation.

Limitations of R-Squared

Overfitting Concerns

One critical limitation of R-squared is its vulnerability to overfitting. Overfitting occurs when a model becomes too complex and captures noise rather than true patterns. This can happen in two primary scenarios:

- The model is trained on a dataset that does not resemble the general population, resulting in poor performance when applied to new data

- The model contains more independent variables than data points, allowing each variable to be artificially “assigned” to a data point, creating an unrealistic perfect fit
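
The second scenario can be illustrated with a small sketch. It assumes a polynomial model in which each power of x acts as a separate predictor, so a polynomial with six coefficients is fitted to six data points. The data are pure random noise, and the names `lagrange_predict` and `r_squared` are hypothetical helpers written for this example.

```python
import random

def lagrange_predict(xs, ys, x_new):
    """Evaluate the unique polynomial passing through all (xs, ys) points."""
    total = 0.0
    for j, (xj, yj) in enumerate(zip(xs, ys)):
        weight = 1.0
        for m, xm in enumerate(xs):
            if m != j:
                weight *= (x_new - xm) / (xj - xm)
        total += yj * weight
    return total

def r_squared(y, y_pred):
    """R^2 = 1 - RSS / TSS."""
    mean_y = sum(y) / len(y)
    tss = sum((yi - mean_y) ** 2 for yi in y)
    rss = sum((yi - pi) ** 2 for yi, pi in zip(y, y_pred))
    return 1 - rss / tss

rng = random.Random(0)  # fixed seed so the run is repeatable

# Six training points whose y values are noise with no relation to x
x_train = [0.0, 1.0, 2.0, 3.0, 4.0, 5.0]
y_train = [rng.gauss(0, 1) for _ in x_train]

# A polynomial with six coefficients reproduces every training point
pred_train = [lagrange_predict(x_train, y_train, xi) for xi in x_train]
r2_train = r_squared(y_train, pred_train)

# Fresh noise at new x positions shows how the "perfect" model generalizes
x_new = [0.5, 1.5, 2.5, 3.5, 4.5]
y_new = [rng.gauss(0, 1) for _ in x_new]
pred_new = [lagrange_predict(x_train, y_train, xi) for xi in x_new]
r2_new = r_squared(y_new, pred_new)

print(f"R^2 on training data: {r2_train:.4f}")
print(f"R^2 on new data:      {r2_new:.4f}")
```

The training R² is a perfect 1 even though there is nothing to learn, while the value on new data is lower and can easily be negative.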

What R-Squared Doesn’t Tell You

It is important to understand that no R² value tells you definitively whether a model is good or not; rather, it tells you how well the model fits the specific data you have. R-squared alone cannot determine causation, nor can it identify whether important variables have been omitted from the model.

Field-Specific Variability

R-squared benchmarks vary widely with the research question being addressed. The most reliable benchmark comes from comparison with existing literature on the specific research question under investigation. Different studies may require different R-squared thresholds to gauge model performance and predictive capacity accurately.

R-Squared vs. Adjusted R-Squared

To address some limitations of standard R-squared, statisticians developed adjusted R-squared. The adjusted R² can be interpreted as an instance of the bias-variance tradeoff. When a model becomes more complex by adding more variables, the variance increases while the square of bias decreases.

Adjusted R-squared penalizes the model for including unnecessary variables, providing a more realistic assessment of model performance on new data. This makes adjusted R-squared particularly valuable when comparing models with different numbers of independent variables.
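
As a rough sketch, the commonly used formula is adjusted R² = 1 - (1 - R²)(n - 1) / (n - p - 1), where n is the number of observations and p is the number of independent variables. The function name `adjusted_r_squared` and the example figures below are hypothetical and serve only to show how the penalty behaves.

```python
def adjusted_r_squared(r2, n_obs, n_predictors):
    """Adjusted R^2 = 1 - (1 - R^2) * (n - 1) / (n - p - 1)."""
    dof = n_obs - n_predictors - 1  # residual degrees of freedom
    if dof <= 0:
        raise ValueError("need more observations than predictors plus one")
    return 1 - (1 - r2) * (n_obs - 1) / dof

# Hypothetical comparison: five extra predictors raise R^2 only slightly
small_model = adjusted_r_squared(0.500, n_obs=30, n_predictors=3)
large_model = adjusted_r_squared(0.505, n_obs=30, n_predictors=8)

print(f"3 predictors: adjusted R^2 = {small_model:.3f}")
print(f"8 predictors: adjusted R^2 = {large_model:.3f}")
```

In this example the larger model has the higher plain R² but the lower adjusted R², because the small gain in fit does not offset the penalty for five additional variables.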

Practical Applications Across Industries

Finance and Investment Analysis

In financial markets, R-squared helps investors and analysts determine how much of a fund’s or stock’s movement can be explained by a benchmark index. An R² of 0.85 in this context means that 85% of the variation in the security’s returns is explained by movements in the index, with the remaining 15% attributable to fund-specific factors. It does not mean the security moves in line with the index 85% of the time.

Medical Research

Clinical researchers use R-squared to evaluate predictive models for patient outcomes. Given the multifactorial nature of disease, lower R² values are expected and acceptable. Researchers complement R-squared analysis with other relevant statistical tests and validation techniques for robust results.

Engineering and Physical Sciences

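Engineers typically require higher R² values because physical systems can be more precisely measured and controlled. A manufacturing process model with R² = 0.92, for example, explains 92% of the observed variation in the process output, which suggests strong predictability provided the model also holds up on new data.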

Calculating and Improving R-Squared

To calculate R-squared from raw data, practitioners typically use statistical software that automates the computation process. However, understanding the underlying calculation strengthens interpretation skills.

To improve R-squared values:

- Include relevant independent variables that have theoretical justification

- Check for data quality issues and outliers that may skew results

- Consider non-linear relationships or interaction terms between variables

- Ensure adequate sample size relative to the number of variables

- Validate findings on independent test datasets

The Role of R-Squared in Model Selection

When choosing between competing regression models, R-squared serves as one evaluation criterion among several. However, researchers should not rely on R-squared alone. A model with high R² but poor performance on new data suggests overfitting has occurred. Conversely, a slightly lower R² combined with strong theoretical support and good out-of-sample performance may indicate a more robust model.

Cross-validation techniques, residual analysis, and domain expertise should complement R-squared evaluation in model selection processes.
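
One way to sketch cross-validation is k-fold evaluation of out-of-sample R². The example below is a minimal illustration for simple linear regression; the data, the choice of three folds, and the helper names `fit_line`, `r_squared`, and `k_fold_r_squared` are assumptions made for this example.

```python
def fit_line(x, y):
    """Ordinary least squares fit of y = intercept + slope * x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope

def r_squared(y, y_pred):
    """R^2 = 1 - RSS / TSS."""
    mean_y = sum(y) / len(y)
    tss = sum((yi - mean_y) ** 2 for yi in y)
    rss = sum((yi - pi) ** 2 for yi, pi in zip(y, y_pred))
    return 1 - rss / tss

def k_fold_r_squared(x, y, k):
    """Fit on k-1 folds, score R^2 on the held-out fold, repeat for each fold."""
    scores = []
    indices = range(len(x))
    for fold in range(k):
        train = [i for i in indices if i % k != fold]
        test = [i for i in indices if i % k == fold]
        intercept, slope = fit_line([x[i] for i in train], [y[i] for i in train])
        y_test = [y[i] for i in test]
        y_pred = [intercept + slope * x[i] for i in test]
        scores.append(r_squared(y_test, y_pred))
    return scores

# Hypothetical data, roughly following y = 2x + 1
x = [float(i) for i in range(12)]
y = [1.2, 2.9, 5.1, 7.3, 8.8, 11.1, 13.2, 14.7, 17.0, 19.1, 20.8, 23.2]

scores = k_fold_r_squared(x, y, k=3)
print("Held-out R^2 per fold:", [round(s, 4) for s in scores])
print("Mean held-out R^2:", round(sum(scores) / len(scores), 4))
```

If the held-out scores are close to the R² obtained on the full dataset, the fit is less likely to be an artifact of overfitting; a large gap is a warning sign.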

Frequently Asked Questions

Q: What does an R-squared value of 0.5 mean?

A: An R² of 0.5 indicates that 50% of the variation in the dependent variable is explained by the independent variables in the model, while 50% remains unexplained. Whether this is acceptable depends on your field and research context.

Q: Can R-squared be negative?

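A: While R² typically ranges from 0 to 1, it can be negative when it is computed as 1 minus the ratio of residual to total sum of squares and the model fits worse than a simple horizontal line at the mean. This can happen with non-linear models, with models fitted without an intercept, or when a model is evaluated on data it was not fitted to.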
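
A short sketch makes the negative case concrete. The numbers and the helper name `r_squared` are hypothetical; the predictions are deliberately chosen to run in the opposite direction to the data.

```python
def r_squared(y, y_pred):
    """R^2 = 1 - RSS / TSS."""
    mean_y = sum(y) / len(y)
    tss = sum((yi - mean_y) ** 2 for yi in y)
    rss = sum((yi - pi) ** 2 for yi, pi in zip(y, y_pred))
    return 1 - rss / tss

y = [1.0, 2.0, 3.0, 4.0, 5.0]

# Baseline: always predict the mean of the observed values
mean_y = sum(y) / len(y)
baseline = r_squared(y, [mean_y] * len(y))

# A model whose predictions trend the wrong way
reversed_pred = [5.0, 4.0, 3.0, 2.0, 1.0]
worse_than_mean = r_squared(y, reversed_pred)

print(baseline)         # 0.0
print(worse_than_mean)  # -3.0
```

Here TSS is 10 and the reversed predictions give RSS of 40, so R² is 1 - 40/10 = -3.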

Q: Is a higher R-squared always better?

A: Not necessarily. An artificially high R² may indicate overfitting, where the model has learned noise rather than true patterns. A model with a moderately high R² and good theoretical justification often outperforms a model with a very high R² that fails on new data.

Q: How does R-squared relate to correlation?

A: In simple linear regression, R² is the square of the correlation coefficient. If the correlation coefficient (r) is 0.7, then R² would be 0.49, meaning 49% of variance is explained.

Q: Should I always report R-squared in my research?

A: R² is a valuable metric to report alongside other statistics. However, complement it with additional validation techniques, effect sizes, and confidence intervals for comprehensive model evaluation.

Q: How many variables should I include to maximize R-squared?

A: Include variables with theoretical justification rather than maximizing R² by adding unnecessary variables. Use adjusted R² or information criteria like AIC to balance model complexity with explanatory power.

Conclusion

R-squared remains an indispensable tool for evaluating regression model performance across diverse fields. By quantifying the proportion of variance explained by independent variables, R² provides a standardized metric for model assessment and comparison. However, practitioners must understand its limitations and interpret it within appropriate disciplinary contexts.

The most effective approach to model evaluation combines R² with other statistical measures, domain expertise, and validation techniques. Researchers should always compare their R² values with established benchmarks in their field and consider whether their model generalizes well to new data. By using R-squared as one component of comprehensive statistical analysis rather than as a standalone measure, analysts can make more informed decisions about model quality and reliability.

References

- Determining a Meaningful R-squared Value in Clinical Medicine — Scholastica HQ. 2025. https://academic-med-surg.scholasticahq.com/article/125154

- Coefficient of Determination — Wikipedia. Retrieved November 2025. https://en.wikipedia.org/wiki/Coefficient_of_determination
