Linear vs Multiple Regression: Key Differences

Understand the critical differences between linear and multiple regression analysis methods.

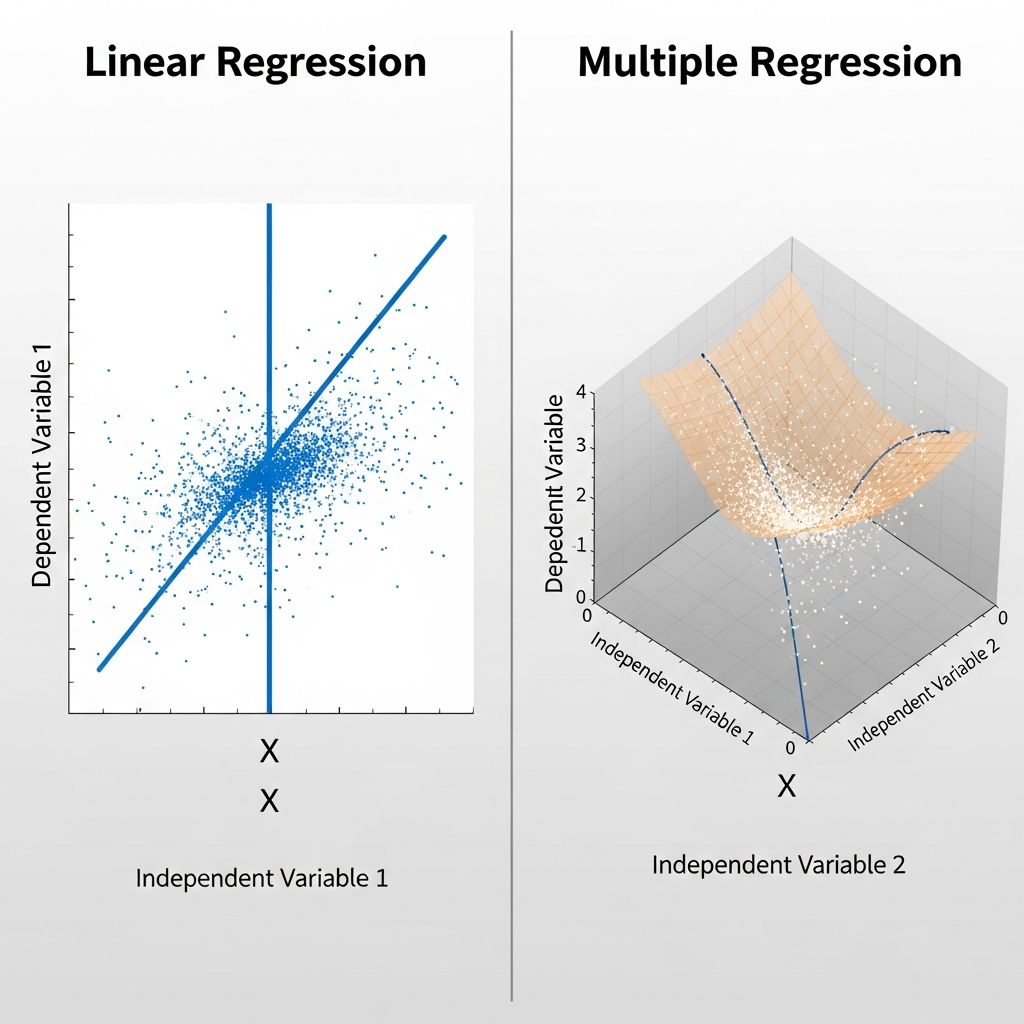

Understanding Linear Regression and Multiple Regression

Regression analysis is a fundamental statistical technique used in finance, economics, and data science to understand and predict relationships between variables. Two primary forms of regression analysis—simple linear regression and multiple regression—are widely used across various industries to model these relationships. While both techniques share common objectives, they differ significantly in their structure, complexity, and applications. Understanding these differences is crucial for selecting the appropriate analytical method for your specific research questions or business problems.

The primary purpose of any regression analysis is twofold: first, to measure the influence of one or more variables on another variable, and second, to predict a variable based on one or more other variables. Both simple and multiple linear regression serve these purposes, but they do so with different levels of sophistication and capability. As datasets become more complex and multi-dimensional, the choice between these methods becomes increasingly important.

Simple Linear Regression Explained

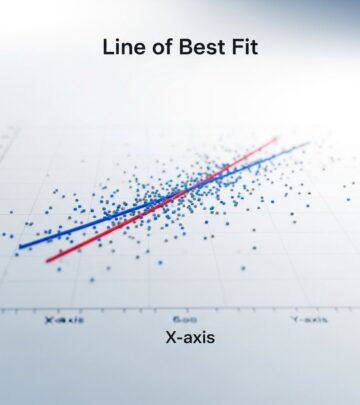

Simple linear regression is the foundation of regression analysis. It examines the relationship between exactly two variables: one independent variable (also called a predictor or explanatory variable) and one dependent variable (also called the response or outcome variable). The independent variable is used to predict or explain changes in the dependent variable.

In simple linear regression, you fit a straight line through a scatter plot of data points so that it best represents the relationship between the two variables. This line, known as the best-fit line or regression line, is usually found by ordinary least squares: it minimizes the sum of the squared vertical distances (residuals) between the observed values and the values the line predicts. The mathematical equation for simple linear regression is:

Y = a + bX

Where Y is the dependent variable, X is the independent variable, a is the y-intercept (constant), and b is the slope of the line (the coefficient representing the relationship between X and Y). The equation shows that for every one-unit increase in X, the predicted value of Y changes by b units.
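For readers who want the computation behind the best-fit line, here is a short derivation sketch. It assumes the line is fitted by ordinary least squares, the usual criterion for a best-fit line. The index i, the sample size n, and the bars denoting sample means are notation introduced here for illustration.

$$
\min_{a,\,b}\ \sum_{i=1}^{n} \left(Y_i - a - bX_i\right)^2
\quad\Longrightarrow\quad
b = \frac{\sum_{i=1}^{n} (X_i - \bar{X})(Y_i - \bar{Y})}{\sum_{i=1}^{n} (X_i - \bar{X})^2},
\qquad
a = \bar{Y} - b\,\bar{X}
$$

The formula for a implies that the fitted line always passes through the point of means (X̄, Ȳ).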

A practical example of simple linear regression would be predicting house prices based solely on square footage. Here, square footage is your independent variable (X), and house price is your dependent variable (Y). The regression analysis would determine the relationship between these two variables and allow you to predict a house’s price given its square footage.
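The house price example can be sketched in a few lines of Python. This is a minimal illustration that assumes an ordinary least squares fit and uses only the standard library. The five data points and the function name `fit_simple_regression` are invented for this example and do not come from a real dataset.

```python
from statistics import mean


def fit_simple_regression(x, y):
    """Return (a, b) for the least-squares line y = a + b*x."""
    x_bar, y_bar = mean(x), mean(y)
    # Sum of cross-deviations and sum of squared deviations of x.
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    b = sxy / sxx          # slope
    a = y_bar - b * x_bar  # intercept
    return a, b


# Hypothetical data: square footage and sale price in thousands of dollars.
square_feet = [1000, 1500, 2000, 2500, 3000]
price_k = [200, 290, 410, 500, 600]

a, b = fit_simple_regression(square_feet, price_k)

# Predict the price of a hypothetical 1,800 sq ft house.
predicted_price_k = a + b * 1800
print(f"intercept={a:.3f}, slope={b:.4f}, prediction={predicted_price_k:.1f}")
```

With these made-up numbers the slope is the estimated price change, in thousands of dollars, per additional square foot.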

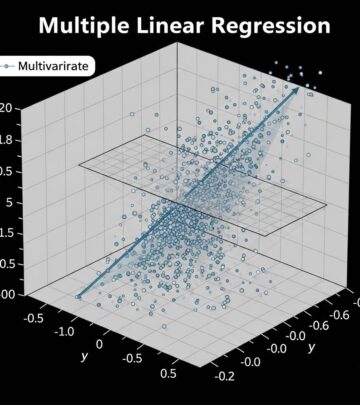

Multiple Linear Regression Explained

Multiple linear regression extends the concept of simple linear regression by incorporating two or more independent variables to predict a single dependent variable. This method acknowledges that real-world outcomes are rarely influenced by just one factor, and provides a more sophisticated approach to modeling complex relationships.

In multiple regression, you are analyzing how several independent variables work together to influence a dependent variable. The mathematical equation for multiple linear regression expands to include all predictor variables:

Ŷ = β₀ + β₁X₁ + β₂X₂ + … + βₖXₖ

Where Ŷ is the predicted value of the dependent variable, β₀ is the intercept, β₁, β₂, through βₖ are the coefficients for each independent variable, and X₁, X₂ through Xₖ are the independent variables. Each coefficient represents the change in the dependent variable for a one-unit change in that particular independent variable, holding all other variables constant.

Returning to the house price example, multiple regression would allow you to predict house prices using not just square footage, but also variables such as number of bedrooms, location, age of the house, condition, and proximity to schools. By analyzing all these factors together, you get a more comprehensive and typically more accurate prediction model.
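To make the multi-variable version concrete, the sketch below estimates the coefficients by solving the normal equations (XᵀX)β = Xᵀy. That is one standard way to compute a least-squares fit and an assumption here, since the article does not specify an estimation method. The function names, the six houses, and the prices are all hypothetical. The prices were generated exactly from price = 50 + 100·size + 10·bedrooms − 0.5·age so that the recovered coefficients can be checked. The hand-written solver exists only to keep the example dependency-free; in practice a numerical or statistics library would normally do this step.

```python
def solve_linear_system(A, b):
    """Solve A x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(A)
    # Build the augmented matrix [A | b].
    M = [list(row) + [rhs] for row, rhs in zip(A, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        if abs(M[pivot][col]) < 1e-12:
            raise ValueError("matrix is singular (perfectly collinear predictors?)")
        M[col], M[pivot] = M[pivot], M[col]
        # Eliminate this column from every other row.
        for r in range(n):
            if r != col:
                factor = M[r][col] / M[col][col]
                M[r] = [v - factor * p for v, p in zip(M[r], M[col])]
    return [M[i][n] / M[i][i] for i in range(n)]


def fit_multiple_regression(rows, y):
    """Return [b0, b1, ..., bk] minimizing the sum of squared residuals."""
    X = [[1.0] + list(row) for row in rows]  # leading 1.0 is the intercept column
    k = len(X[0])
    xtx = [[sum(r[i] * r[j] for r in X) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * yi for r, yi in zip(X, y)) for i in range(k)]
    return solve_linear_system(xtx, xty)


# Hypothetical houses: (size in 1000s of sq ft, bedrooms, age in years).
houses = [(1.0, 2, 30), (1.5, 3, 20), (2.0, 3, 15),
          (2.5, 4, 10), (3.0, 4, 5), (1.8, 2, 25)]
# Hypothetical prices in $1000s, generated without noise from the formula above.
prices = [155.0, 220.0, 272.5, 335.0, 387.5, 237.5]

coefficients = fit_multiple_regression(houses, prices)
print([round(c, 4) for c in coefficients])
```

Real data would include noise, so the estimated coefficients would only approximate any underlying relationship.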

Key Differences Between Simple and Multiple Regression

The fundamental difference between simple linear regression and multiple regression lies in the number of independent variables used in the analysis. While this may seem like a simple distinction, it has significant implications for the complexity, accuracy, and applicability of your model.

Number of Variables

Simple linear regression uses only one independent variable to predict the dependent variable, so the fitted model is a line in a two-dimensional plane. Multiple linear regression, by contrast, uses two or more independent variables, so the fitted model is a plane or hyperplane in a higher-dimensional space. This lets multiple regression capture the joint influence of several factors. Interactions between variables are captured only if interaction terms, such as the product of two predictors, are added to the model explicitly.

Complexity and Visualization

Simple linear regression can be easily visualized on a two-dimensional plot with X and Y axes. Multiple regression becomes difficult to visualize once there are more than two independent variables, because the data no longer fit in a three-dimensional plot. From a computational perspective, however, modern statistical software can handle dozens or even hundreds of independent variables. The trade-off is that while multiple regression provides greater analytical power, it also becomes less intuitive to interpret visually.

Model Accuracy

Multiple regression often provides more accurate predictions than simple linear regression because it accounts for additional factors that influence the dependent variable. In many real-world scenarios, outcomes depend on multiple factors rather than just one. By incorporating relevant additional variables, multiple regression can explain a greater proportion of the variation in the dependent variable. Keep in mind that the in-sample fit (R²) never decreases when a predictor is added, even an irrelevant one, so any gain in accuracy should be confirmed on data the model has not seen.
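The "proportion of variation explained" is usually measured by R². A common companion is adjusted R², which penalizes each extra predictor. Adjusted R² is brought in here as a standard textbook quantity, not something the article itself discusses. The sketch below shows both. The function names and the sample values are hypothetical.

```python
from statistics import mean


def r_squared(y, y_hat):
    """Share of the variation in y explained by the predictions y_hat."""
    y_bar = mean(y)
    ss_res = sum((yi - fi) ** 2 for yi, fi in zip(y, y_hat))  # unexplained
    ss_tot = sum((yi - y_bar) ** 2 for yi in y)               # total
    return 1.0 - ss_res / ss_tot


def adjusted_r_squared(r2, n, k):
    """Adjust R² for n observations and k predictors (requires n > k + 1)."""
    return 1.0 - (1.0 - r2) * (n - 1) / (n - k - 1)


# Hypothetical observed values and model predictions.
observed = [3, 5, 7, 10]
predicted = [3.2, 4.8, 7.4, 9.6]

r2 = r_squared(observed, predicted)
print(f"R² = {r2:.4f}")

# Same R², different numbers of predictors: the penalty grows with k.
print(adjusted_r_squared(0.9, n=11, k=2))
print(adjusted_r_squared(0.9, n=11, k=5))
```

If adding a predictor raises R² but lowers adjusted R², the extra variable is probably not contributing much.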

Interpretability

Simple linear regression is straightforward to interpret: if the slope is 2, then for every one-unit increase in X, Y increases by 2 units. Multiple regression is more complex to interpret because each coefficient must be understood in the context of all other variables in the model. The coefficient for one variable represents its effect on the dependent variable while holding all other variables constant—a concept known as “ceteris paribus” or “all else being equal.”
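The "all else being equal" reading can be checked numerically: change one input by one unit, keep the others fixed, and the prediction moves by exactly that variable's coefficient. The coefficients below are hypothetical values chosen for illustration, not estimates from real data.

```python
def predict(coefficients, values):
    """Return b0 + b1*x1 + ... + bk*xk for a single observation."""
    intercept, *slopes = coefficients
    return intercept + sum(b * x for b, x in zip(slopes, values))


# Hypothetical fitted model: price in $1000s from
# size (1000s of sq ft), bedrooms, and age (years).
coefficients = [50.0, 100.0, 10.0, -0.5]

base = predict(coefficients, [2.0, 3, 15])
one_more_bedroom = predict(coefficients, [2.0, 4, 15])  # size and age unchanged

bedroom_effect = one_more_bedroom - base
print(bedroom_effect)  # equals the bedroom coefficient
```

This holds because the model is linear and has no interaction terms. With interaction terms, the effect of one variable would depend on the values of the others.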

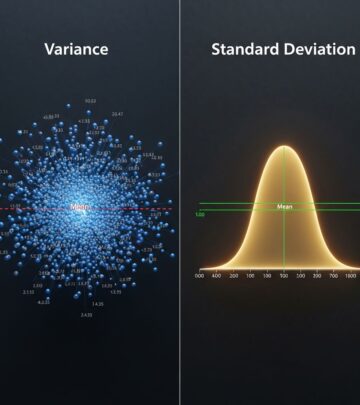

Mathematical Formulation and Assumptions

Both simple and multiple linear regression share common underlying assumptions that must be met for the models to provide reliable results. These assumptions include linearity, independence of errors (no autocorrelation), constant variance of errors (homoscedasticity), and normality of errors.

For multiple regression specifically, an additional critical assumption is the absence of strong multicollinearity. Multicollinearity occurs when two or more independent variables are highly correlated with each other. It inflates the variance of the coefficient estimates, which makes them unstable and hard to interpret even when the model's overall predictions remain reasonable. This is why careful variable selection is essential in multiple regression analysis.
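One widely used way to quantify this inflation is the variance inflation factor, VIF = 1 / (1 − R²), where R² comes from regressing one predictor on the others. With exactly two predictors, that R² equals the squared Pearson correlation between them, which is the simplified case sketched below. The function names and the data are hypothetical, and the VIF is introduced here as a standard diagnostic, not something the article itself names.

```python
from math import sqrt
from statistics import mean


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length lists."""
    x_bar, y_bar = mean(x), mean(y)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    syy = sum((yi - y_bar) ** 2 for yi in y)
    return sxy / sqrt(sxx * syy)


def vif_two_predictors(x1, x2):
    """VIF for a model with exactly two predictors.

    Raises ZeroDivisionError if the predictors are perfectly correlated.
    """
    r = pearson_r(x1, x2)
    return 1.0 / (1.0 - r ** 2)


# Hypothetical predictors: house size (1000s of sq ft) and bedroom count.
size = [1, 2, 3, 4, 5]
bedrooms = [2, 4, 5, 4, 5]

vif = vif_two_predictors(size, bedrooms)
print(f"r = {pearson_r(size, bedrooms):.3f}, VIF = {vif:.2f}")
```

A VIF of 1 means no inflation. Values above roughly 5 or 10 are often treated as a warning sign, though such cutoffs are conventions, not strict rules.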

Both regression types assume that the dependent variable is continuous and metric (meaning it can take on any value within a range), such as salary, body size, or electricity consumption. The relationship between variables must be linear, meaning the changes in the dependent variable occur proportionally to changes in the independent variables.

Types of Multiple Regression Analysis

Multiple regression can be implemented in different ways, each with its own advantages:

Standard or Single Step Regression

In standard regression, all predictor variables enter the regression equation simultaneously. Each predictor is evaluated on what it adds to the prediction of the dependent variable beyond what all the other predictors already explain. This method is straightforward and provides a complete model of all variables’ relationships at once.

Sequential or Hierarchical Regression

Sequential regression enters predictors in blocks or steps, allowing researchers to examine how the model changes as variables are added. This approach is useful for testing theoretical models where variables are expected to have a hierarchical relationship or when you want to assess the incremental contribution of each variable or set of variables to the model’s predictive power.
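The incremental contribution of a block is commonly summarized by the change in R² between the model without the block (reduced) and the model with it (full), tested with an F statistic. The symbols below are notation introduced here: m is the number of predictors added in the step, k is the total number of predictors in the full model, and n is the number of observations.

$$
\Delta R^2 = R^2_{\text{full}} - R^2_{\text{reduced}},
\qquad
F = \frac{\Delta R^2 / m}{\left(1 - R^2_{\text{full}}\right) / (n - k - 1)}
$$

Under the usual regression assumptions, and if the added coefficients are truly zero, F follows an F distribution with m and n − k − 1 degrees of freedom. A large F therefore suggests the new block improves the model beyond chance.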

Advantages and Limitations

Understanding the strengths and weaknesses of each approach helps in choosing the appropriate method for your analysis.

Advantages of Linear Regression

Linear regression, whether simple or multiple, offers several key advantages:

- Simplicity and interpretability: The models are relatively easy to understand and explain to non-technical audiences

- Computational efficiency: Linear regression algorithms are fast and can handle large datasets effectively

- Interpretable coefficients: Each coefficient directly shows the relationship between that variable and the outcome

- Well-established theory: Decades of research provide robust statistical foundations and best practices

Limitations of Linear Regression

Both simple and multiple regression have notable limitations:

- Linear assumption: If the relationship between variables is not linear, the model may perform poorly

- Sensitivity to multicollinearity: In multiple regression, correlated predictors can create unstable estimates

- Outlier sensitivity: Extreme values can disproportionately influence the regression line

- Limited to continuous outcomes: These methods are not suitable for categorical dependent variables

When to Use Simple vs. Multiple Regression

The choice between simple and multiple regression depends on several factors:

Use Simple Linear Regression when:

- You have a clear, single factor influencing your outcome

- Your research question focuses on the relationship between two specific variables

- You need a quick, easy-to-interpret analysis

- You have limited data or want to avoid model complexity

Use Multiple Linear Regression when:

- Multiple factors influence your dependent variable

- You want to control for confounding variables

- You need higher prediction accuracy

- Your theoretical model involves multiple predictors

- You want to understand the independent effect of each variable on the outcome

Practical Applications

Both regression methods find widespread use across industries. In finance, simple regression might analyze the relationship between market returns and interest rates, while multiple regression might predict stock prices using earnings, dividend yield, and industry factors. In real estate, simple regression could relate property values to square footage, while multiple regression adds location, age, condition, and local amenities. In healthcare research, simple regression might examine blood pressure changes with age, while multiple regression explores how age, weight, exercise frequency, and diet collectively affect blood pressure.

Frequently Asked Questions

Q: What is the main difference between linear regression and multiple regression?

A: The primary difference is the number of independent variables. Simple linear regression uses one independent variable to predict a dependent variable, while multiple regression uses two or more independent variables. This makes multiple regression more complex but typically more accurate in capturing real-world relationships.

Q: Can I use multiple regression with just two variables?

A: It depends on which two variables you mean. If you have two variables in total (one independent and one dependent), the model is a simple linear regression. If you have two independent variables plus a dependent variable, the model is a multiple regression, which by definition needs at least two independent variables.

Q: How do I know if my variables meet the assumptions of linear regression?

A: You can use diagnostic plots and statistical tests to check assumptions. Scatter plots reveal linearity, residual plots show homoscedasticity, Q-Q plots indicate normality, and correlation matrices can identify multicollinearity. Many statistical software packages provide automated diagnostics.
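As a small illustration of what a residual plot can reveal, the sketch below fits a straight line to data that actually follow a curve and lists the (fitted value, residual) pairs one would plot. The data and the function name `fit_line` are hypothetical, and the fit assumes ordinary least squares.

```python
from statistics import mean


def fit_line(x, y):
    """Return (a, b) for the least-squares line y = a + b*x."""
    x_bar, y_bar = mean(x), mean(y)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    b = sxy / sxx
    return y_bar - b * x_bar, b


# Hypothetical data that follow y = x**2, so a straight line is the wrong shape.
x = [1, 2, 3, 4, 5]
y = [1, 4, 9, 16, 25]

a, b = fit_line(x, y)
fitted = [a + b * xi for xi in x]
residuals = [yi - fi for yi, fi in zip(y, fitted)]

# These pairs are what a residual-versus-fitted plot would display.
for f, r in zip(fitted, residuals):
    print(f"fitted={f:6.1f}  residual={r:5.1f}")
```

Here the residuals are 2, −1, −2, −1, 2: positive at both ends and negative in the middle. A systematic U-shape like this suggests the linearity assumption is violated. Residuals scattered randomly around zero would be consistent with it.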

Q: What is multicollinearity and why is it a problem?

A: Multicollinearity occurs when independent variables are highly correlated with each other. This inflates the variance of the coefficient estimates, making them unstable and making it difficult to determine each variable’s separate effect on the outcome. You can address this by removing or combining correlated variables, or by using techniques like principal component analysis.

Q: How many variables should I include in a multiple regression model?

A: There’s no fixed number, but you should balance model accuracy with interpretability. Including too many variables can lead to overfitting, where the model fits the training data too closely but performs poorly on new data. Use variable selection techniques and cross-validation to find the optimal number of predictors.
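Cross-validation can be sketched with its simplest variant, leave-one-out: hold out each observation in turn, fit on the rest, and measure the error on the held-out point. The example uses a one-predictor model to stay short. The same loop applies to any candidate set of predictors, and the set with the lowest held-out error is preferred. Function names and data are hypothetical.

```python
from statistics import mean


def fit_line(x, y):
    """Return (a, b) for the least-squares line y = a + b*x."""
    x_bar, y_bar = mean(x), mean(y)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    b = sxy / sxx
    return y_bar - b * x_bar, b


def loocv_mse(x, y):
    """Leave-one-out cross-validated mean squared prediction error."""
    squared_errors = []
    for i in range(len(x)):
        # Train on every observation except the i-th.
        x_train = x[:i] + x[i + 1:]
        y_train = y[:i] + y[i + 1:]
        a, b = fit_line(x_train, y_train)
        # Test on the single held-out observation.
        squared_errors.append((y[i] - (a + b * x[i])) ** 2)
    return mean(squared_errors)


# Hypothetical data: one exactly linear series and one curved series.
x = [1, 2, 3, 4, 5]
linear_y = [3, 5, 7, 9, 11]
curved_y = [1, 4, 9, 16, 25]

print(loocv_mse(x, linear_y))  # essentially zero: the line generalizes
print(loocv_mse(x, curved_y))  # clearly positive: the line misses the curve
```

Because the error is always measured on data excluded from fitting, an overfitted model cannot hide behind a good in-sample fit.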

Q: Can linear regression predict categorical outcomes?

A: Linear regression is designed for continuous dependent variables. For categorical outcomes (like yes/no or category A/B/C), use logistic regression or other classification methods instead.

