Correlation Coefficient: Definition, Formula & Types

Master correlation coefficients: Learn how to measure relationships between variables in data analysis.

Correlation Coefficient: Understanding Data Relationships

A correlation coefficient is a quantitative measure that describes the strength and direction of a linear relationship between two variables. It is one of the most fundamental tools in statistical analysis, widely used by researchers, analysts, and financial professionals to understand how variables move in relation to one another. Whether examining the relationship between market returns and interest rates or analyzing the connection between studying hours and exam performance, correlation coefficients provide crucial insights into data patterns and dependencies.

What Is a Correlation Coefficient?

The correlation coefficient is a statistical metric that measures the degree to which two variables are linearly related. Usually denoted by the letter “r” for a sample (and by the Greek letter ρ for a population), it ranges from -1 to 1: its sign gives the direction of the relationship and its magnitude gives the strength. The idea of correlation was introduced by Francis Galton in the 1880s, and the British mathematician Karl Pearson formalized the product-moment formula still used today in the 1890s. It remains a cornerstone of modern statistics and data analysis.

In practical terms, a correlation coefficient allows analysts to quantify what might otherwise be observed qualitatively. Rather than simply noting that two variables seem to move together, the correlation coefficient provides a precise numerical value that can be compared across different datasets and time periods. This standardization makes correlation coefficients invaluable in research, finance, medicine, and numerous other fields where understanding variable relationships is essential.
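
Because r divides out each variable's units, rescaling a variable by a positive factor (for example, converting Celsius to Fahrenheit) leaves it unchanged. The sketch below checks this with a small hand-written `pearson_r` helper; the helper name and the data values are hypothetical, chosen only for illustration.

```python
import math


def pearson_r(x, y):
    """Sample Pearson correlation of two equal-length sequences (undefined if either is constant)."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


# Hypothetical paired observations: daily high temperature and ice cream sales.
temps_c = [12.0, 15.5, 18.0, 21.0, 24.5, 27.0]
sales = [110, 135, 150, 170, 205, 220]

# The same temperatures expressed in Fahrenheit (a positive linear rescaling).
temps_f = [c * 9 / 5 + 32 for c in temps_c]

r_celsius = pearson_r(temps_c, sales)
r_fahrenheit = pearson_r(temps_f, sales)
print(math.isclose(r_celsius, r_fahrenheit))  # True: the units do not affect r
```

Multiplying a variable by a negative factor would flip the sign of r but leave its magnitude unchanged.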

Understanding Correlation Values

The interpretation of a correlation coefficient depends on understanding the range of possible values and what each represents:

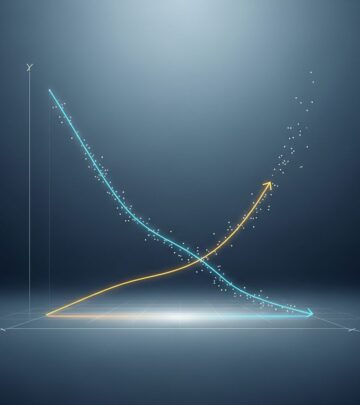

Positive Correlation

A positive correlation coefficient indicates that as one variable increases, the other tends to increase as well. Values range from 0 to 1, with the strength of the relationship increasing as the coefficient approaches 1. A coefficient of 0.30 suggests a weak positive relationship, while 0.84 indicates a much stronger positive relationship. For example, there is typically a positive correlation between advertising spending and sales revenue—companies that invest more in marketing tend to generate higher sales.

Negative Correlation

A negative correlation coefficient indicates an inverse relationship between variables, meaning that as one variable increases, the other tends to decrease. Values range from 0 to -1, with stronger relationships indicated by values closer to -1. A coefficient of -0.15 represents a very weak negative correlation, while -0.65 represents a much stronger one. For instance, vehicle age and resale value are typically negatively correlated: older vehicles generally command lower prices.

No Correlation

A correlation coefficient of 0 indicates no linear relationship between the variables. Knowing the value of one variable then gives no help in predicting the other with a straight line, although the two may still be related in a nonlinear way. On a scatter plot, uncorrelated variables show no upward or downward linear trend, and the least-squares regression line fitted to the data is flat (horizontal).
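
To see why r = 0 does not mean the variables are unrelated, the sketch below (hypothetical values, hand-written `pearson_r` helper) makes y an exact function of x, y = x², on points placed symmetrically around zero. Pearson's r comes out as exactly zero even though y is fully determined by x.

```python
import math


def pearson_r(x, y):
    """Sample Pearson correlation of two equal-length sequences (undefined if either is constant)."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


x = [-3, -2, -1, 0, 1, 2, 3]  # symmetric around zero
y = [v ** 2 for v in x]       # y depends on x perfectly, but along a U-shaped curve

print(pearson_r(x, y))  # 0.0: no *linear* association, despite total dependence
```

The rising right half of the curve and the falling left half cancel out, which is exactly the kind of pattern a linear measure cannot see.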

Perfect Correlation

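A coefficient of exactly 1 or -1 represents perfect correlation: every data point lies exactly on a straight line. Perfect positive correlation (1) means the line slopes upward, so the variables move together in perfect lockstep; perfect negative correlation (-1) means it slopes downward, so they move in exactly opposite directions. The steepness of the line does not matter. In practice, perfect correlations are rare in real-world data, though they occur whenever one variable is an exact linear function of the other, as in mathematical or theoretical scenarios.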
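
The sketch below (hypothetical values, hand-written `pearson_r` helper) shows that points lying exactly on a straight line give r = 1 or r = -1 regardless of how steep the line is: r measures how tightly the points follow a line, not the size of the slope.

```python
import math


def pearson_r(x, y):
    """Sample Pearson correlation of two equal-length sequences (undefined if either is constant)."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


x = [1.0, 2.0, 3.0, 4.0, 5.0]
y_up = [2 * v + 1 for v in x]     # every point on a line with positive slope
y_down = [10 - 3 * v for v in x]  # every point on a line with negative slope
y_steep = [100 * v for v in x]    # a much steeper line still gives r = 1

print(pearson_r(x, y_up))     # 1.0
print(pearson_r(x, y_down))   # -1.0
print(pearson_r(x, y_steep))  # 1.0
```

With less convenient numbers, floating-point rounding can yield values such as 0.9999999999999999 for data that are perfectly correlated in exact arithmetic.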

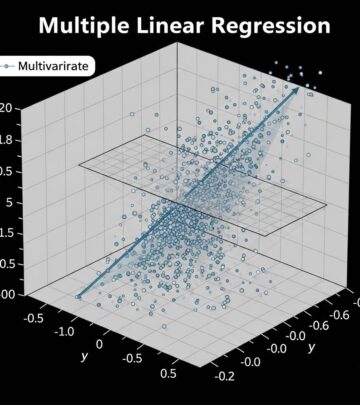

The Pearson Correlation Coefficient

The Pearson correlation coefficient, also referred to as Pearson’s r or the Pearson product-moment correlation coefficient, is the most commonly used type of correlation coefficient. It measures the linear relationship between two continuous variables and was developed by Karl Pearson as a way to quantify statistical associations. It is closely linked to simple linear regression, where researchers model how a dependent variable changes with an independent variable.

Formula and Calculation

The Pearson correlation coefficient is the covariance of the two variables divided by the product of their standard deviations. Dividing by the standard deviations removes the units of measurement and scales the result so that it always lies between -1 and 1. The calculation is simple in principle but tedious by hand, so most statistical software packages, spreadsheet applications, and programming languages include built-in functions to calculate Pearson’s r automatically. Understanding what the formula measures (whether paired data points tend to fall on the same side or on opposite sides of their respective means) helps analysts interpret the results more effectively.
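
Written out for a sample of n pairs (xᵢ, yᵢ) with means x̄ and ȳ, the standard formula is r = Σ(xᵢ − x̄)(yᵢ − ȳ) / √[Σ(xᵢ − x̄)² · Σ(yᵢ − ȳ)²]; the n − 1 denominators of the sample covariance and of the two standard deviations cancel. The Python sketch below implements it directly as covariance over the product of standard deviations, to show what a built-in function computes. The `pearson_r` name and the study-hours data are hypothetical.

```python
import math


def pearson_r(x, y):
    """Pearson's r = sample covariance / (s_x * s_y)."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("x and y must be the same length, with at least 2 points")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    # Sample covariance: average product of paired deviations from the means.
    cov_xy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y)) / (n - 1)
    # Sample standard deviations, using the same n - 1 denominator so it cancels.
    sd_x = math.sqrt(sum((a - mean_x) ** 2 for a in x) / (n - 1))
    sd_y = math.sqrt(sum((b - mean_y) ** 2 for b in y) / (n - 1))
    if sd_x == 0 or sd_y == 0:
        raise ValueError("r is undefined when either variable is constant")
    return cov_xy / (sd_x * sd_y)


hours = [2, 4, 5, 7, 8, 10]        # hypothetical weekly study hours
scores = [55, 60, 68, 72, 80, 85]  # hypothetical exam scores
print(round(pearson_r(hours, scores), 3))  # 0.987
```

Positive products of deviations (both values above, or both below, their means) push r up; negative products push it down.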

Applications in Analysis

The Pearson correlation coefficient is widely used in financial analysis, investment portfolio management, scientific research, and countless other fields. Financial analysts use it to measure the correlation between different stocks or asset classes, helping investors diversify their portfolios effectively. Researchers in various disciplines employ Pearson’s r to understand the strength of relationships in their data, guiding decisions about which variables warrant further investigation.
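
In finance, correlations are commonly computed on returns rather than on raw prices, since two price series that both drift upward can look highly correlated for that reason alone. The sketch below converts hypothetical closing prices into simple period returns and prints each pairwise Pearson r; the asset names, prices, and helper functions are illustrative assumptions.

```python
import math


def pearson_r(x, y):
    """Sample Pearson correlation of two equal-length sequences (undefined if either is constant)."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def simple_returns(prices):
    """Period-over-period returns: p[t] / p[t-1] - 1."""
    return [curr / prev - 1 for prev, curr in zip(prices, prices[1:])]


# Hypothetical closing prices for three assets on the same seven dates.
prices = {
    "stock_a": [100, 102, 101, 105, 107, 106, 110],
    "stock_b": [50, 51, 50.5, 52.5, 53, 52.8, 54.9],
    "bond_c": [200, 199, 200.5, 199.2, 199.0, 200.1, 198.7],
}

returns = {name: simple_returns(p) for name, p in prices.items()}
names = list(returns)

# Print each pairwise correlation once.
for i, a in enumerate(names):
    for b in names[i + 1:]:
        print(f"{a} vs {b}: r = {pearson_r(returns[a], returns[b]):+.2f}")
```

Real analyses use far longer histories than this; with only six returns per asset, the estimates would be very noisy.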

Types of Correlation Coefficients

While Pearson’s correlation coefficient is the most prominent, statisticians have developed several other types to address different data characteristics and analytical needs:

Spearman’s Rank Correlation

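Spearman’s rank correlation coefficient (ρ, or rho) measures the strength and direction of a monotonic relationship between two variables. It is calculated by applying Pearson’s formula to the ranks of the data rather than to the raw values, so it works with ordinal data and with continuous data converted to ranks. Unlike Pearson’s coefficient, which assumes a linear relationship, Spearman’s coefficient is useful when the ordering of observations matters or when the relationship is not strictly linear but consistently increasing or decreasing. Because it uses ranks, it is also less sensitive to outliers.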
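
Since Spearman’s coefficient is Pearson’s r applied to ranks, it can be sketched in a few lines. The version below gives tied values the average of the ranks they occupy, a common convention; the helper names and the cubic example data are hypothetical.

```python
import math


def pearson_r(x, y):
    """Sample Pearson correlation of two equal-length sequences (undefined if either is constant)."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def average_ranks(values):
    """Rank values 1..n; tied values share the average of the ranks they span."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with the one at sorted position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # sorted positions i..j correspond to ranks i+1..j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def spearman_rho(x, y):
    return pearson_r(average_ranks(x), average_ranks(y))


x = [1, 2, 3, 4, 5, 6]
y = [v ** 3 for v in x]  # strictly increasing, but curved

print(round(pearson_r(x, y), 2))  # 0.94: the points bend away from a straight line
print(spearman_rho(x, y))         # 1.0: the ranks agree perfectly
```

Because y = x³ always increases with x, the two rank orders match exactly, so ρ = 1 even though Pearson’s r is below 1.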

Kendall’s Tau

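Kendall’s tau (τ) is another rank-based correlation measure that evaluates the ordinal association between two variables by comparing every pair of observations: a pair is concordant if both variables order it the same way and discordant if they order it in opposite ways. It is often preferred for smaller samples, and its tau-b variant adjusts for tied ranks in the data.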
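
Kendall’s tau can be computed straight from its definition by checking every pair of observations. The O(n²) sketch below implements the tie-adjusted tau-b variant, τ_b = (C − D) / √[(N − Tₓ)(N − T_y)], where C and D count concordant and discordant pairs, N is the total number of pairs, and Tₓ and T_y count pairs tied in each variable. The function name and the two judges’ rankings are hypothetical; faster O(n log n) algorithms exist for large datasets.

```python
import math


def kendall_tau_b(x, y):
    """Kendall's tau-b via an O(n^2) scan over all pairs (tie-adjusted)."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("x and y must be the same length, with at least 2 points")
    concordant = discordant = 0
    ties_x = ties_y = 0  # pairs tied in x (or y), including pairs tied in both
    for i in range(n):
        for j in range(i + 1, n):
            dx = (x[i] > x[j]) - (x[i] < x[j])  # sign of the x difference
            dy = (y[i] > y[j]) - (y[i] < y[j])  # sign of the y difference
            if dx == 0:
                ties_x += 1
            if dy == 0:
                ties_y += 1
            if dx * dy > 0:
                concordant += 1
            elif dx * dy < 0:
                discordant += 1
    total = n * (n - 1) // 2
    denom = math.sqrt((total - ties_x) * (total - ties_y))
    if denom == 0:
        raise ValueError("tau is undefined when either variable is constant")
    return (concordant - discordant) / denom


judge_a = [1, 2, 3, 4, 5]  # hypothetical rankings of five entries
judge_b = [1, 3, 2, 4, 5]  # the judges disagree on one pair only
print(kendall_tau_b(judge_a, judge_b))  # 0.8
```

Of the 10 pairs, 9 are concordant and 1 is discordant, giving (9 − 1) / 10 = 0.8.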

Point-Biserial Correlation

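The point-biserial correlation coefficient is used when one variable is continuous and the other is dichotomous (binary), such as pass/fail or treated/untreated. It provides a measure of association between these two types of variables and is numerically identical to Pearson’s r computed with the binary variable coded as 0 and 1.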
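
The point-biserial coefficient can also be written in terms of group means: r_pb = (M₁ − M₀) / s · √(p·q), where M₁ and M₀ are the means of the continuous variable in the two groups, p and q are the proportions of observations in each group, and s is the standard deviation of all values computed with n (not n − 1) in the denominator. The sketch checks that this matches Pearson’s r with 0/1 coding; the function names and the pass/fail data are hypothetical.

```python
import math


def pearson_r(x, y):
    """Sample Pearson correlation of two equal-length sequences (undefined if either is constant)."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def point_biserial(binary, values):
    """r_pb = (M1 - M0) / s * sqrt(p * q), with s the population SD of all values."""
    group1 = [v for b, v in zip(binary, values) if b == 1]
    group0 = [v for b, v in zip(binary, values) if b == 0]
    n = len(values)
    if not group1 or not group0 or len(group1) + len(group0) != n:
        raise ValueError("binary must contain only 0s and 1s, with both present")
    m1 = sum(group1) / len(group1)
    m0 = sum(group0) / len(group0)
    mean = sum(values) / n
    s = math.sqrt(sum((v - mean) ** 2 for v in values) / n)  # divide by n, not n - 1
    p = len(group1) / n
    q = 1 - p
    return (m1 - m0) / s * math.sqrt(p * q)


passed = [0, 0, 1, 0, 1, 1, 1, 0]                   # hypothetical outcome: 1 = passed
hours = [1.0, 2.5, 4.0, 2.0, 5.5, 3.5, 6.0, 3.0]    # hypothetical hours of preparation

print(math.isclose(point_biserial(passed, hours), pearson_r(passed, hours)))  # True
```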

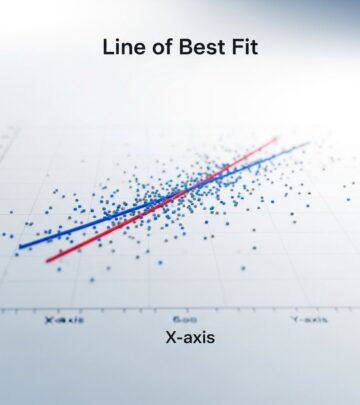

Linear Regression and Correlation

Correlation coefficients are closely tied to linear regression analysis, a statistical method that models the relationship between variables using a straight line. In simple linear regression, data points are plotted on a scatter graph and a line is fitted through them, usually by the least-squares method, to represent the average relationship. The correlation coefficient quantifies how closely the data points cluster around this line.
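
For simple linear regression with a single independent variable fitted by ordinary least squares, the fitted line and Pearson’s r are linked by standard identities. Here x̄ and ȳ are the sample means and s_x and s_y the sample standard deviations:

```latex
\hat{y} = b_0 + b_1 x,
\qquad
b_1 = r \, \frac{s_y}{s_x},
\qquad
b_0 = \bar{y} - b_1 \bar{x},
\qquad
R^2 = r^2
```

The slope always has the same sign as r, the line passes through the point of means (x̄, ȳ), and r² is the fraction of the variance in y accounted for by the line. These identities hold for the one-predictor case; with several independent variables, R² is no longer the square of a single pairwise r.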

Dependent and Independent Variables

In regression analysis, variables are classified as either independent or dependent. Independent (explanatory) variables, typically represented by “x”, are the inputs used to explain or predict an outcome. Dependent variables, typically represented by “y”, are the outcomes whose values are modeled as changing with the independent variables. This distinction matters for regression and for interpreting results, but Pearson’s r itself is symmetric: swapping x and y gives the same value.

Practical Example

Consider a study examining the relationship between sunlight exposure and plant growth. In this scenario, sunlight exposure would be the independent variable, while plant growth would be the dependent variable. A strong positive correlation coefficient would indicate that plants receiving more sunlight tend to grow taller or more robustly. The correlation coefficient quantifies the strength of this relationship, and a regression line fitted to the same data can then be used to predict plant growth from known sunlight levels, ideally only within the range of sunlight levels actually observed.
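
The plant example can be sketched as a least-squares fit followed by a prediction. Everything here is hypothetical: the measurements are invented for illustration, and `fit_line` is a small helper, not a library function.

```python
import math


def fit_line(x, y):
    """Ordinary least squares for y = intercept + slope * x; also returns Pearson's r."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("both variables must vary")
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x  # the line passes through the point of means
    r = sxy / math.sqrt(sxx * syy)
    return slope, intercept, r


sun_hours = [2, 3, 4, 5, 6, 7, 8]                # hypothetical daily sunlight (hours)
growth_cm = [3.1, 4.0, 5.2, 5.9, 7.1, 7.8, 9.0]  # hypothetical growth over the study (cm)

slope, intercept, r = fit_line(sun_hours, growth_cm)
print(f"r = {r:.3f}, r^2 = {r * r:.3f}")
print(f"slope = {slope:.2f} cm per extra hour of sunlight")

# Predict only inside the observed range (2 to 8 hours); extrapolation is riskier.
print(f"predicted growth at 5.5 h: {intercept + slope * 5.5:.1f} cm")
```

The correlation says how reliable the straight-line description is; the fitted slope and intercept are what actually produce the prediction.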

Interpreting Correlation Coefficients in Practice

Effective interpretation of correlation coefficients requires understanding both their magnitude and their context. A correlation of 0.70 might represent a strong relationship in social sciences but could be considered weak in physical sciences where measurements are more precise. Additionally, analysts must remember that correlation does not imply causation—a strong correlation between two variables does not mean that one causes the other.

Strength Guidelines

| Correlation Range | Relationship Strength | Illustrative Example |
|---|---|---|
| 0.80 to 1.00 (or -0.80 to -1.00) | Very Strong | Height and shoe size |
| 0.60 to 0.79 (or -0.60 to -0.79) | Strong | Education level and income |
| 0.40 to 0.59 (or -0.40 to -0.59) | Moderate | Temperature and ice cream sales |
| 0.20 to 0.39 (or -0.20 to -0.39) | Weak | Social media followers and test scores |
| 0.00 to 0.19 (or 0.00 to -0.19) | Very Weak or None | Shoe size and intelligence |
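
The bands in the Strength Guidelines table can be encoded as a simple lookup. This is a sketch of one reading of that rule of thumb, not a formal standard: it classifies by the absolute value of r and treats each band’s lower bound as inclusive, so values falling in the table’s gaps (such as 0.795) go to the lower band. The function name is hypothetical.

```python
def describe_strength(r):
    """Label r using the rule-of-thumb bands from the Strength Guidelines table."""
    if not -1.0 <= r <= 1.0:
        raise ValueError("a correlation coefficient must lie between -1 and 1")
    size = abs(r)  # strength depends on magnitude; the sign only gives direction
    if size >= 0.80:
        strength = "very strong"
    elif size >= 0.60:
        strength = "strong"
    elif size >= 0.40:
        strength = "moderate"
    elif size >= 0.20:
        strength = "weak"
    else:
        strength = "very weak or none"
    direction = "positive" if r > 0 else "negative" if r < 0 else "none"
    return strength, direction


for r in (0.84, 0.30, -0.15, -0.65):
    print(r, describe_strength(r))
```

As the surrounding text notes, what counts as "strong" varies by field, so the thresholds are worth adjusting to the discipline.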

Advantages and Limitations

Advantages

- Provides a single, standardized numerical value for comparing relationships across datasets

- Easy to calculate and interpret with modern statistical software

- Works effectively with continuous variables and linear relationships

- Widely understood and accepted across academic and professional disciplines

- Useful for identifying variables worthy of further investigation

Limitations

- Only captures linear relationships; nonlinear associations may be missed

- Sensitive to outliers that can skew results significantly

- Does not imply causation, potentially leading to misinterpretation

- Requires sufficient data points for reliable estimation

- May not be appropriate for all data types or distributions
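
The sensitivity to outliers can be seen by comparing Pearson’s r with Spearman’s rank correlation (Pearson’s formula applied to ranks) on hypothetical data, before and after appending one extreme point. The helper names are illustrative, and the simple `ranks` function assumes there are no tied values, which holds for this data.

```python
import math


def pearson_r(x, y):
    """Sample Pearson correlation of two equal-length sequences (undefined if either is constant)."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def ranks(values):
    """Ranks 1..n; assumes no tied values."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0] * len(values)
    for rank, idx in enumerate(order, start=1):
        result[idx] = rank
    return result


def spearman_rho(x, y):
    return pearson_r(ranks(x), ranks(y))


x = list(range(1, 11))
y = [2, 1, 4, 3, 6, 5, 8, 7, 10, 9]  # hypothetical data that closely tracks x

x_out = x + [11]
y_out = y + [-30]  # one extreme observation appended

print(f"clean:        Pearson {pearson_r(x, y):+.2f}, Spearman {spearman_rho(x, y):+.2f}")
print(f"with outlier: Pearson {pearson_r(x_out, y_out):+.2f}, Spearman {spearman_rho(x_out, y_out):+.2f}")
```

With this data, one added point drags Pearson’s r from about +0.94 to about -0.27, reversing its sign, while Spearman’s ρ falls only to about +0.45.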

Applications in Finance and Investment

In the financial industry, correlation coefficients play a critical role in portfolio management and risk assessment. Investment professionals analyze the correlation between different securities to construct diversified portfolios that reduce overall risk. Assets with low or negative correlations provide better diversification benefits because their values do not move in tandem. During market stress, correlation patterns may change, which is why analysts continuously monitor correlations alongside other metrics when making investment decisions.
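
The diversification effect can be made concrete with the standard two-asset portfolio variance formula, σₚ² = w₁²σ₁² + w₂²σ₂² + 2w₁w₂ρσ₁σ₂, where w₁ and w₂ are the portfolio weights, σ₁ and σ₂ the assets’ volatilities, and ρ the correlation between their returns. The sketch assumes a hypothetical 50/50 split between two assets that each have 20% annual volatility; only ρ changes.

```python
import math


def portfolio_volatility(w1, sigma1, sigma2, rho):
    """Std. dev. of a two-asset portfolio with weights w1 and 1 - w1 and return correlation rho."""
    w2 = 1 - w1
    variance = (w1 * sigma1) ** 2 + (w2 * sigma2) ** 2 + 2 * w1 * w2 * rho * sigma1 * sigma2
    return math.sqrt(max(variance, 0.0))  # guard against a tiny negative from rounding


for rho in (1.0, 0.5, 0.0, -0.5, -1.0):
    vol = portfolio_volatility(0.5, 0.20, 0.20, rho)
    print(f"rho = {rho:+.1f}: portfolio volatility = {vol:.3f}")
```

Portfolio volatility falls from 20% when ρ = 1 to about 14% when ρ = 0 and to zero when ρ = -1, even though neither asset became less risky on its own.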

Frequently Asked Questions

Q: What is the difference between correlation and causation?

A: Correlation measures whether two variables move together, while causation implies that one variable directly causes changes in another. A strong correlation does not prove causation. For example, ice cream sales and drowning deaths are positively correlated, but both are actually caused by a third factor—warm weather—rather than one causing the other.
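
The ice cream example can be simulated. In the synthetic data below, temperature drives both series and neither series affects the other, yet they are clearly correlated. A partial correlation, r_xy·z = (r_xy − r_xz·r_yz) / √[(1 − r_xz²)(1 − r_yz²)], removes the linear effect of temperature and brings the association close to zero. All numbers, coefficients, and helper names are hypothetical.

```python
import math
import random


def pearson_r(x, y):
    """Sample Pearson correlation of two equal-length sequences (undefined if either is constant)."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def partial_r(r_xy, r_xz, r_yz):
    """First-order partial correlation of x and y, controlling linearly for z."""
    return (r_xy - r_xz * r_yz) / math.sqrt((1 - r_xz ** 2) * (1 - r_yz ** 2))


rng = random.Random(42)  # fixed seed so reruns give the same synthetic data
n = 1000

# Synthetic data: temperature drives both series; neither series affects the other.
temperature = [rng.uniform(10, 35) for _ in range(n)]
ice_cream = [20 * t + rng.gauss(0, 60) for t in temperature]
drownings = [0.3 * t + rng.gauss(0, 1.5) for t in temperature]  # synthetic index, not real counts

r_xy = pearson_r(ice_cream, drownings)
r_xz = pearson_r(ice_cream, temperature)
r_yz = pearson_r(drownings, temperature)

print(f"ice cream vs drownings:            r = {r_xy:.2f}")
print(f"same, controlling for temperature: r = {partial_r(r_xy, r_xz, r_yz):.2f}")
```

Controlling for a suspected confounder only works if that variable was measured; correlations alone cannot rule out confounders nobody thought to record.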

Q: Can correlation coefficients be greater than 1 or less than -1?

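A: No. Correlation coefficients always fall between -1 and 1. A value outside this range indicates a calculation error or a misapplied formula, apart from negligible floating-point rounding (for example, software reporting 1.0000000000000002 for perfectly correlated data).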
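
The bound follows from the Cauchy-Schwarz inequality. Writing aᵢ and bᵢ for the deviations of each observation from its mean, r is the cosine of the angle between the two deviation vectors, and its size cannot exceed 1:

```latex
\begin{aligned}
a_i &= x_i - \bar{x}, \qquad b_i = y_i - \bar{y},\\
r &= \frac{\sum_{i=1}^{n} a_i b_i}{\sqrt{\sum_{i=1}^{n} a_i^2}\,\sqrt{\sum_{i=1}^{n} b_i^2}},\\
\Bigl|\sum_{i=1}^{n} a_i b_i\Bigr| &\le \sqrt{\sum_{i=1}^{n} a_i^2}\,\sqrt{\sum_{i=1}^{n} b_i^2}
\quad\Longrightarrow\quad -1 \le r \le 1.
\end{aligned}
```

Equality holds exactly when the bᵢ are a constant multiple of the aᵢ, that is, when every point lies on a single straight line, which is the case of perfect correlation.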

Q: How many data points do I need to calculate a reliable correlation coefficient?

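A: Correlation can be calculated from as few as two data points, but with only two distinct points r is always exactly 1 or -1, so it carries no information. A common rule of thumb is to use at least 30 observations, although the sample size actually needed depends on how strong the correlation is and how precisely it must be estimated. Larger samples provide more stable and generalizable correlation estimates.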
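
One way to see why sample size matters is to put a confidence interval around r. A common approach, sketched here, uses Fisher’s z-transformation, z = atanh(r), whose standard error is approximately 1/√(n − 3); it assumes independent observations from a roughly bivariate normal distribution. The function name is hypothetical, and the observed r = 0.50 is held fixed while n varies.

```python
import math


def fisher_ci(r, n, z_crit=1.96):
    """Approximate confidence interval for Pearson's r via Fisher's z (1.96 gives ~95%)."""
    if n <= 3:
        raise ValueError("need more than 3 observations")
    if not -1 < r < 1:
        raise ValueError("r must be strictly between -1 and 1")
    z = math.atanh(r)           # transform to a roughly normal scale
    se = 1 / math.sqrt(n - 3)   # approximate standard error of z
    return math.tanh(z - z_crit * se), math.tanh(z + z_crit * se)


for n in (10, 30, 100, 1000):
    lo, hi = fisher_ci(0.5, n)
    print(f"n = {n:4d}: r = 0.50, 95% CI roughly [{lo:.2f}, {hi:.2f}]")
```

With 10 observations the interval runs from about -0.19 to 0.86, so even the sign of the correlation is uncertain; by 100 observations it has narrowed to roughly 0.34 to 0.63.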

Q: Is Pearson correlation the only type of correlation coefficient I should use?

A: No. The appropriate correlation coefficient depends on your data type and on the kind of relationship you expect. Pearson’s r is best for continuous variables with linear relationships, while Spearman’s rho or Kendall’s tau may be more suitable for ranked or ordinal data, for monotonic but nonlinear relationships, or when outliers are a concern.

Q: How do I handle outliers when calculating correlation coefficients?

A: Outliers can significantly affect correlation coefficients. Options include identifying and removing outliers if they result from measurement errors, using robust correlation methods less sensitive to outliers, or performing sensitivity analysis to understand how outliers affect your results.
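
Leave-one-out analysis is one simple form of the sensitivity analysis mentioned above: recompute r with each observation removed in turn and look for points whose removal changes the result sharply. The helper names are illustrative, and the data are hypothetical, with one deliberately extreme final point.

```python
import math


def pearson_r(x, y):
    """Sample Pearson correlation of two equal-length sequences (undefined if either is constant)."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def leave_one_out(x, y):
    """Recompute r with each point dropped in turn; returns (index, r_without_point) pairs."""
    results = []
    for i in range(len(x)):
        xs = x[:i] + x[i + 1:]
        ys = y[:i] + y[i + 1:]
        results.append((i, pearson_r(xs, ys)))
    return results


x = [1, 2, 3, 4, 5, 6, 7, 8, 20]  # hypothetical data; the last point is far from the rest
y = [3, 1, 4, 2, 5, 3, 4, 2, 25]

full = pearson_r(x, y)
print(f"all points: r = {full:+.2f}")
for i, r_i in leave_one_out(x, y):
    print(f"drop point {i}: r = {r_i:+.2f} (change {r_i - full:+.2f})")
```

Here the full-sample r is about 0.91, but dropping the last point alone brings it down to about 0.18, a sign that this single observation is driving the result and deserves a closer look.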

Q: What does a correlation of 0 really mean?

A: A correlation of 0 indicates no linear relationship between variables. However, this does not necessarily mean the variables are completely independent; there could be a nonlinear relationship that the correlation coefficient does not capture.


Author: Medha Deb